Distributed Training: Train BART/T5 for Summarization using 🤗 Transformers and Amazon SageMaker

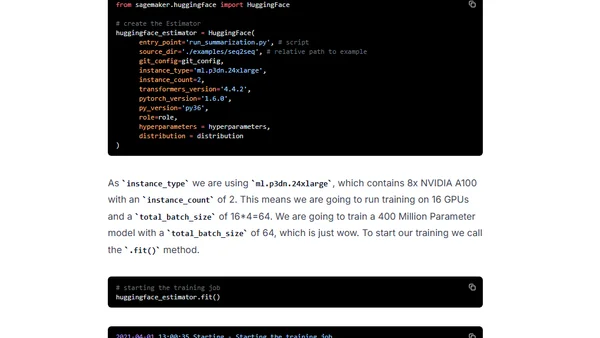

This technical guide details how to perform distributed training of sequence-to-sequence models such as BART and T5 for text summarization. It uses the Hugging Face Transformers library, Amazon SageMaker's Data Parallelism library, and the new Hugging Face Deep Learning Containers to accelerate model training and deployment.
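As a rough illustration of how these pieces fit together, the sketch below assembles the arguments for a SageMaker `HuggingFace` estimator with SageMaker data parallelism enabled. It is a minimal sketch under stated assumptions, not the original post's exact setup: the script name `train.py`, the `./scripts` directory, the model and dataset names, the instance type, the framework versions, and the hyperparameter values are all illustrative placeholders that you would adapt to your own account and to the container versions available in your region.

```python
from typing import Any, Dict


def build_training_config(
    model_name: str = "facebook/bart-large-cnn",  # assumed example checkpoint
    dataset_name: str = "samsum",                 # assumed example dataset
    instance_count: int = 2,
) -> Dict[str, Any]:
    """Assemble keyword arguments for a SageMaker HuggingFace estimator.

    Nothing here talks to AWS; it only builds plain Python dictionaries.
    """
    # Hyperparameters are passed to the training script as command-line
    # arguments. The names below assume a script that accepts them.
    hyperparameters = {
        "model_name_or_path": model_name,
        "dataset_name": dataset_name,
        "per_device_train_batch_size": 4,
        "num_train_epochs": 3,
        "output_dir": "/opt/ml/model",  # SageMaker uploads this directory to S3
    }

    # Enables the SageMaker distributed data parallel library.
    distribution = {"smdistributed": {"dataparallel": {"enabled": True}}}

    return {
        "entry_point": "train.py",         # hypothetical training script
        "source_dir": "./scripts",         # hypothetical local directory
        "instance_type": "ml.p3.16xlarge", # assumed multi-GPU instance type
        "instance_count": instance_count,
        "transformers_version": "4.6.1",   # assumed container versions;
        "pytorch_version": "1.7.1",        # check which combinations are
        "py_version": "py36",              # currently supported
        "hyperparameters": hyperparameters,
        "distribution": distribution,
    }


def build_estimator(config: Dict[str, Any], role: str):
    """Create the estimator. Requires the `sagemaker` package and AWS credentials."""
    # Imported lazily so the config above can be inspected without the SDK.
    from sagemaker.huggingface import HuggingFace

    return HuggingFace(role=role, **config)


if __name__ == "__main__":
    config = build_training_config()
    print(config["distribution"])
    print(config["hyperparameters"]["model_name_or_path"])
```

With valid AWS credentials and an IAM execution role, calling `build_estimator(config, role).fit()` would start the training job inside the Hugging Face Deep Learning Container. Keeping the configuration in a separate function makes it easy to review the instance count and distribution settings before any billable resources are launched.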
