Introduction to Data Engineering Concepts | Data Lakes Explained

Explains data lakes, their key characteristics, and how they differ from data warehouses in modern data architecture.

Alex Merced — Developer and technical writer sharing in-depth insights on data engineering, Apache Iceberg, data lakehouse architectures, Python tooling, and modern analytics platforms, with a strong focus on practical, hands-on learning.

Explores Apache Iceberg, Arrow, and Polaris—three key technologies powering modern, high-performance data lakehouse platforms.

Explains the data lakehouse architecture, a unified approach combining data lake scalability with warehouse management features like ACID transactions.

Explores the modern data stack, cloud platforms, and principles for building flexible, cloud-native data engineering architectures.

Explores how DevOps principles like CI/CD, infrastructure as code, and monitoring are applied to data engineering for reliable, scalable data pipelines.

Explores core principles of scalable data engineering, including parallelism, minimizing data movement, and designing adaptable pipelines for growing data volumes.

Explores workflow orchestration in data engineering, covering DAGs, tools, and best practices for managing complex data pipelines.

Explains the importance of data storage formats and compression for performance and cost in large-scale data engineering systems.

Explains core data engineering concepts: metadata, data lineage, and governance, and their importance for scalable, compliant data systems.

Explores the importance of data quality and validation in data engineering, covering key dimensions and tools for reliable pipelines.

An introduction to data warehousing concepts, covering architecture, components, and performance optimization for analytical workloads.

An introduction to data modeling concepts, covering OLTP vs OLAP systems, normalization, and common schema designs for data engineering.

Explains streaming data fundamentals, how streaming systems work, their use cases, and challenges compared to batch processing.

Explains batch processing fundamentals for data engineering, covering concepts, tools, and its ongoing relevance in data workflows.

Explains core data engineering concepts, comparing ETL and ELT data pipeline strategies and their use cases.

An introduction to data engineering concepts, focusing on data sources and ingestion strategies like batch vs. streaming.

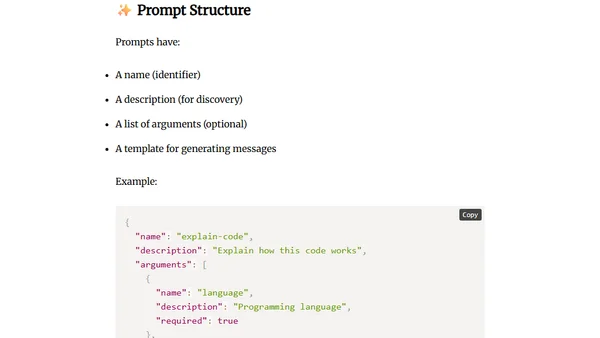

Explains how Sampling and Prompts in the Model Context Protocol (MCP) enable smarter, safer, and more controlled AI agent workflows.

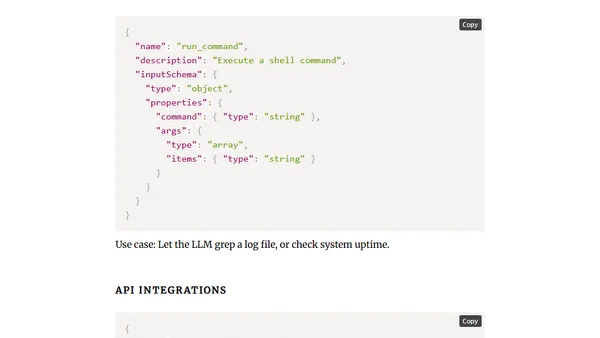

Explains how Tools in the Model Context Protocol (MCP) enable LLMs to execute actions like running commands or calling APIs, moving beyond just reading data.

Explains how the Model Context Protocol (MCP) uses 'Resources' to securely serve structured data from systems like files and databases to LLMs.

Explains the architecture of the Model Context Protocol (MCP), detailing its client-server model, core components, and message flow for connecting AI models to tools and data.