On Information Theoretic Bounds for SGD

Explores how mutual information and KL divergence can be used to derive information-theoretic generalization bounds for Stochastic Gradient Descent (SGD).

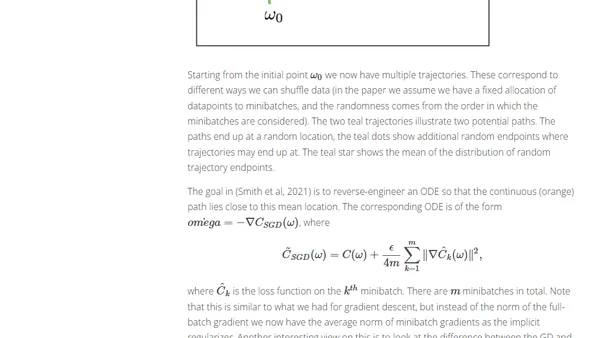

Explores how Stochastic Gradient Descent (SGD) inherently prefers certain minima, leading to better generalization in deep learning than classical theory predicts.

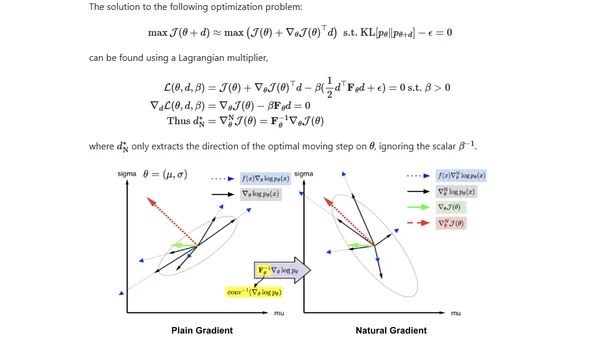

An introduction to Evolution Strategies (ES) as a black-box optimization alternative to gradient descent, with applications in deep reinforcement learning.