On Information Theoretic Bounds for SGD

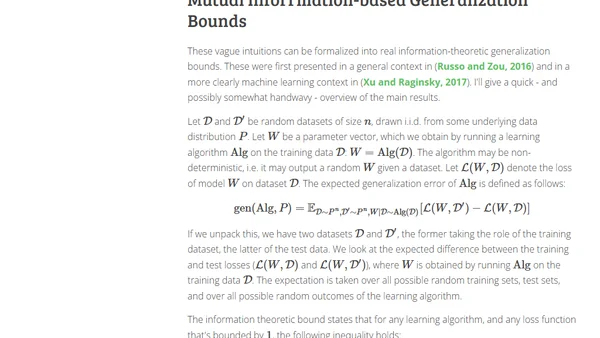

This technical blog post discusses a theoretical approach to understanding the generalization of Stochastic Gradient Descent (SGD) using information theory. It explains a thought experiment that links the mutual information between the model parameters and the training dataset to generalization performance, and outlines how KL divergences are used to derive formal bounds for SGD.
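
For orientation, the kind of statement this summary refers to can be sketched with the standard mutual-information generalization bound. The notation is an assumption made here and is not taken from the post: $W$ is the learned parameters, $S$ is a training set of $n$ i.i.d. samples, and the loss is assumed to be $\sigma$-sub-Gaussian for every fixed parameter value. The post's own bounds for SGD may be stated differently.

```latex
% Expected generalization gap controlled by the information
% the parameters W carry about the training set S:
\left| \mathbb{E}\big[ L_{\text{test}}(W) - L_{\text{train}}(W, S) \big] \right|
  \;\le\; \sqrt{\frac{2\sigma^{2}}{n}\, I(W; S)}

% The mutual information is itself an expected KL divergence,
% which is where KL terms enter such derivations:
I(W; S) \;=\; \mathbb{E}_{S}\!\left[ \mathrm{KL}\big( P_{W \mid S} \,\big\|\, P_{W} \big) \right]
```

Read this way, an algorithm whose output depends only weakly on the particular training set has small $I(W; S)$ and therefore a small expected gap between training and test loss.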
