Book Review: Architects of Intelligence by Martin Ford

A review of 'Architects of Intelligence,' a book featuring interviews with 23 leading AI researchers and industry experts.

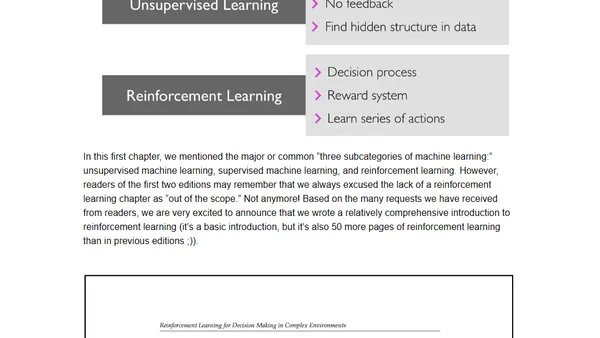

Announcing the 3rd edition of Python Machine Learning, updated for TensorFlow 2.0 and featuring a new chapter on Generative Adversarial Networks (GANs).

Explores self-supervised learning, which trains models on unlabeled data by constructing supervised tasks from the data itself, and covers key concepts and models.

Explores the practical uses of reversible computing, from automatic differentiation in deep learning to distributed systems and database operations.

Explores the visual similarities between images generated by neural networks and human experiences in dreams or under psychedelics.

A blog post exploring the parallels and differences between human cognition and machine learning, including biases and inspirations.

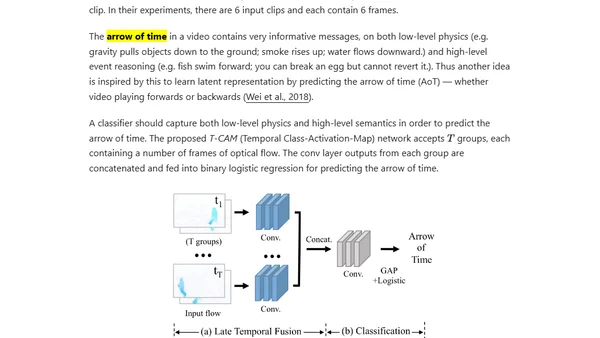

Explores efficient state representations for robots to accelerate Reinforcement Learning training, comparing pixel-based and model-based approaches.

A professor reflects on teaching new Machine Learning and Deep Learning courses at UW-Madison and showcases student projects from those classes.

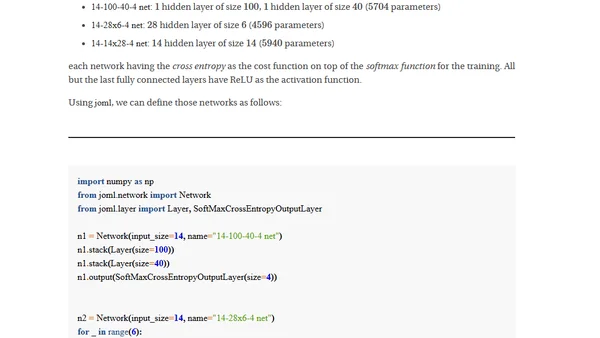

A deep dive into designing and implementing a Multilayer Perceptron from scratch, exploring the core concepts of neural network architecture and training.

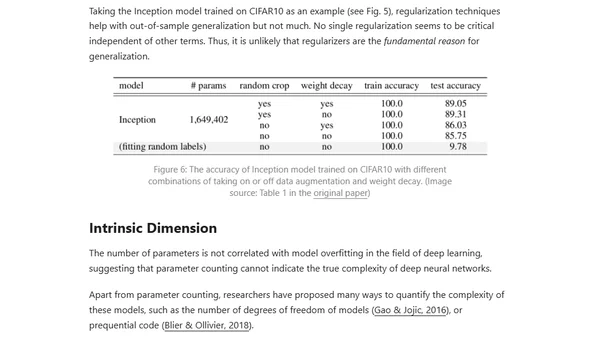

Explores the paradox of why deep neural networks generalize well despite having many parameters, discussing theories like Occam's Razor and the Lottery Ticket Hypothesis.

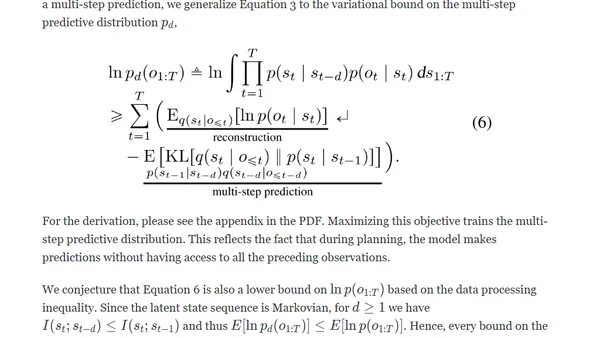

Introduces PlaNet, a model-based AI agent that learns environment dynamics from pixels and plans actions in latent space for efficient control tasks.

Explores handling Out-of-Vocabulary (OOV) values in machine learning, using deep learning for dynamic data in recommender systems as an example.

Explains how Graph Neural Networks and node2vec use graph structure and random walks to generate embeddings for machine learning tasks.

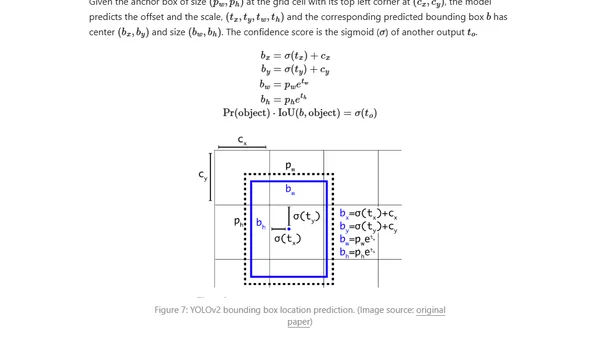

Explores fast, one-stage object detection models like YOLO, SSD, and RetinaNet, comparing them to slower two-stage R-CNN models.

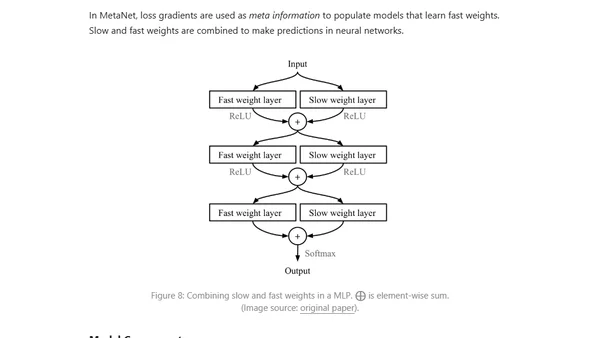

An introduction to meta-learning ('learning to learn'), a machine learning approach in which models learn to adapt quickly to new tasks from minimal data.

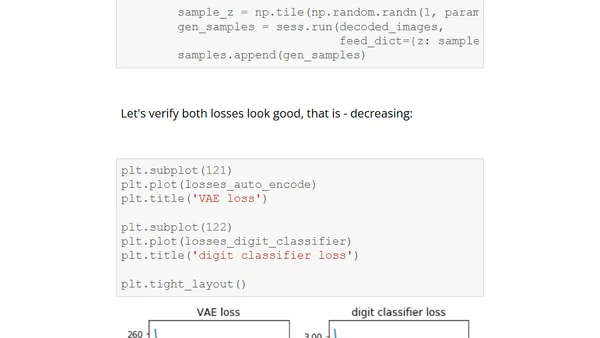

A detailed technical tutorial on implementing a Variational Autoencoder (VAE) with TensorFlow, including code and conditioning on digit types.

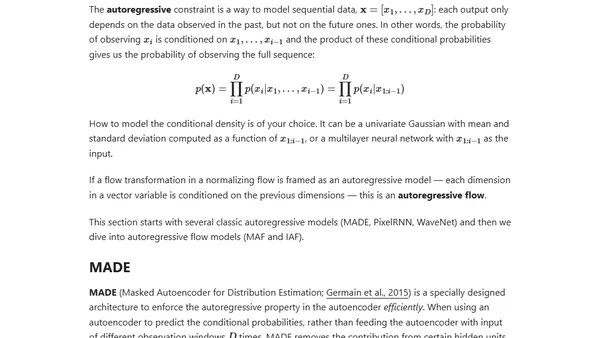

An introduction to flow-based deep generative models, explaining how they explicitly learn data distributions using normalizing flows, compared to GANs and VAEs.

An overview of tools and techniques for creating clear and insightful diagrams to visualize complex neural network architectures.