TIL: Masked Language Models Are Surprisingly Capable Zero-Shot Learners

Explores using a masked language model's mask-prediction head directly for zero-shot tasks, achieving strong results without task-specific heads.
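
As a rough illustration of the idea, one common way to get zero-shot predictions from a mask-prediction head is to phrase the task as a fill-in-the-blank prompt and compare the scores the head assigns to a few label words at the masked position. The sketch below assumes that setup; the post's actual method may differ. The function name, the prompt, and the toy logits are all hypothetical, and no real model is called.

```python
import math


def zero_shot_from_mask_logits(mask_logits, verbalizers):
    """Pick the label whose verbalizer token scores highest at the mask position.

    mask_logits: dict mapping token -> raw logit at the masked position
                 (hypothetical values here; a real model would supply them)
    verbalizers: dict mapping label -> the single token that stands for it
    """
    # Keep only the logits of the tokens that represent a label.
    scores = {label: mask_logits[token] for label, token in verbalizers.items()}

    # Softmax restricted to the label tokens, shifted by the max for stability.
    max_score = max(scores.values())
    exp_scores = {label: math.exp(s - max_score) for label, s in scores.items()}
    total = sum(exp_scores.values())
    probs = {label: e / total for label, e in exp_scores.items()}

    best_label = max(probs, key=probs.get)
    return best_label, probs


# Made-up logits for a prompt such as:
# "The movie was great. Overall it was [MASK]."
toy_logits = {"good": 4.2, "bad": 0.7, "the": 1.5}
label, probs = zero_shot_from_mask_logits(
    toy_logits, {"positive": "good", "negative": "bad"}
)
print(label, probs)
```

No task-specific classification head is trained in this setup: the only task-specific pieces are the prompt and the label-to-token mapping.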

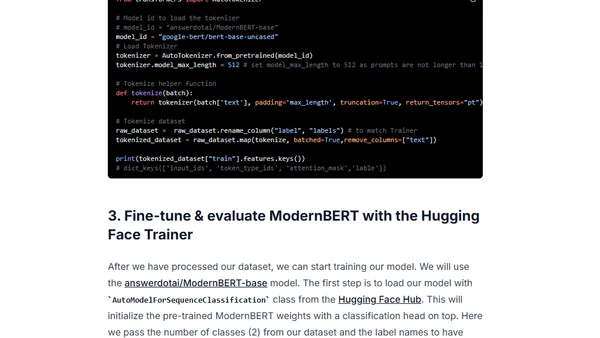

A tutorial on fine-tuning the ModernBERT model for classification tasks to build an efficient LLM router, covering setup, training, and evaluation.

Introducing ModernBERT, a new family of state-of-the-art encoder models designed as a faster, more efficient replacement for the widely-used BERT.