The Ihaka Lectures 3: Rise of the Machine Learners

Announcement for a lecture series on machine learning, covering topics like Weka, deep learning, algorithmic fairness, and sparse supervised learning.

A researcher's 2018 highlights: using machine learning for cognitive brain mapping, analyzing non-curated data, and contributing to scikit-learn development.

A guide on building a personal brand as a data scientist, covering path selection, blogging, and sharing knowledge within the community.

A review and tips for the challenging OMSCS CS6601 Artificial Intelligence course, covering its content, workload, and personal experience.

A data scientist details how a flawed train-test split method introduced bias when adding image thumbnails to a content recommendation model.

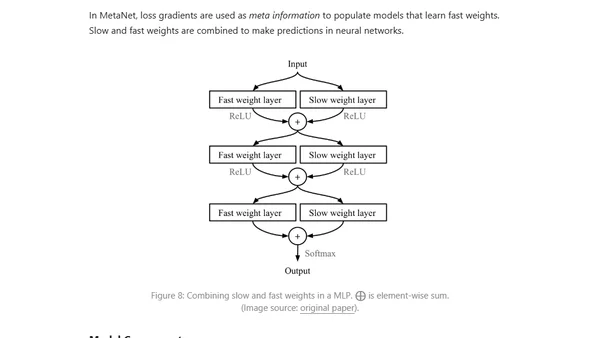

An introduction to meta-learning ("learning to learn"), a machine learning approach in which models learn to adapt quickly to new tasks with minimal data.

Overview of new features, changes, and fixes in PHP-ML 0.7.0, a machine learning library for PHP developers.

Explores the 3D framework (Decomposition, Data, Deployment) for designing and deploying effective machine learning systems in business contexts.

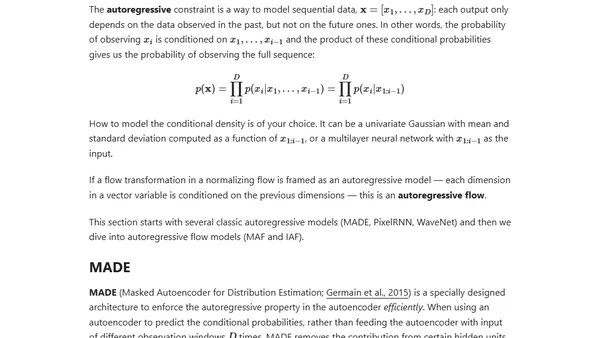

An introduction to flow-based deep generative models, explaining how they explicitly learn data distributions using normalizing flows, compared to GANs and VAEs.

Inria establishes a foundation to secure funding and support for the scikit-learn open-source machine learning library, enabling sustainable growth and development.

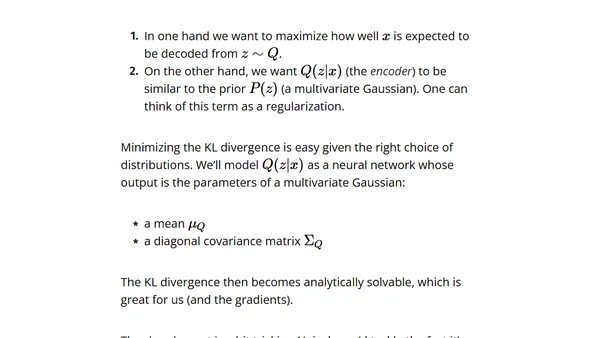

A technical explanation of Variational Autoencoders (VAEs), covering their theory, latent space, and how they generate new data.

Explains four levels of customer targeting, from no segmentation to advanced recommendation systems, and their business applications.

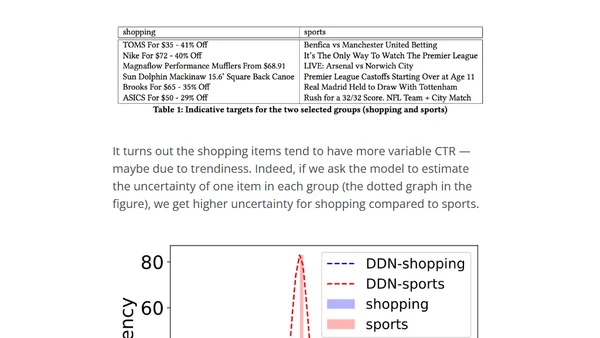

Explains how Taboola built a unified neural network model to predict CTR and estimate prediction uncertainty for recommender systems.

A report on recent scikit-learn sprints in Austin and Paris, highlighting new features, bug fixes, and progress toward the 0.20 release.

Explores how uncertainty estimation in deep neural networks can be used for model interpretation, debugging, and improving reliability in high-risk applications.

A review and tips for Georgia Tech's OMSCS CS7642 Reinforcement Learning course, covering workload, projects, and key learnings.

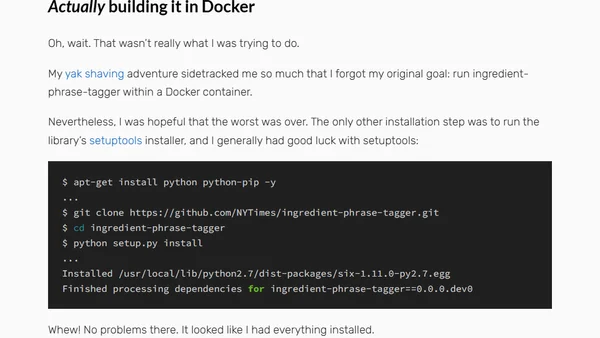

A developer recounts the process of reviving a deprecated open-source Python library for parsing recipe ingredients, detailing the challenges of legacy code.

Explores GPU-based data science workflows using MapD (now OmniSci) for high-performance analytics and machine learning without data transfer bottlenecks.

Explains the attention mechanism in deep learning, its motivation from human perception, and its role in improving seq2seq models and in architectures like the Transformer.

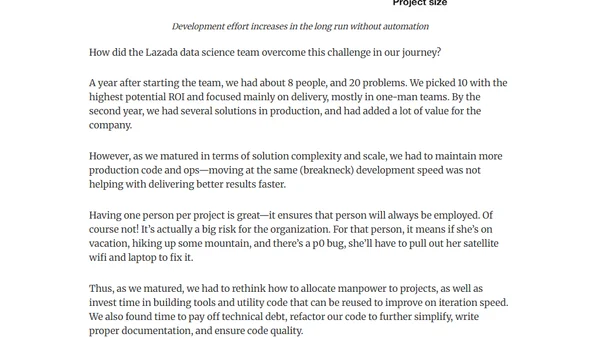

A data science leader shares the challenges of scaling a data science team at Lazada, focusing on balancing business input with ML automation.