Expert Beginners and Lone Wolves will dominate this early LLM era

A technical architect explores using local LLMs as junior developers for a blog migration, analyzing their strengths and common pitfalls.

A guide to running OpenAI's Codex CLI against a self-hosted LLM on an NVIDIA DGX Spark, reached over Tailscale for remote coding assistance.

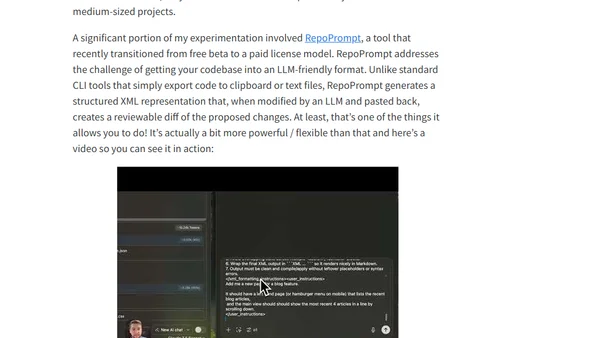

A developer shares insights and practical tips from a week of experimenting with local LLMs, including model recommendations and iterative improvement patterns.