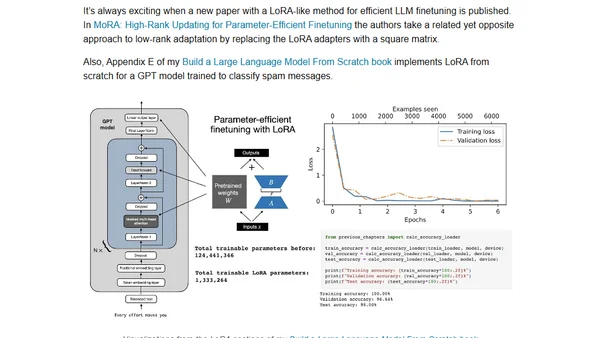

LLM Research Insights: Instruction Masking and New LoRA Finetuning Experiments?

Explores new research on instruction masking and LoRA finetuning techniques for improving large language models (LLMs).

Analyzes how LLMs and AI are making technical interviews harder, leading to more complex coding questions and increased cheating, and proposes work sample tests as a better alternative.

A summary of a talk on applying Large Language Models (LLMs) to build and deploy recommendation systems at scale, presented at Netflix's PRS workshop.

Uses computational theory to analyze whether AI can replace humans, comparing countable vs. uncountable problems and examining AI's inherent limitations.

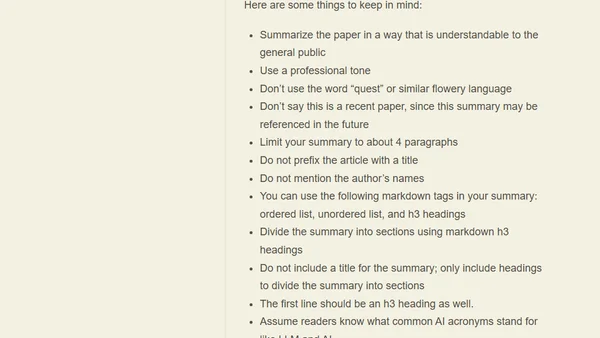

Practical lessons from integrating LLMs into a product, focusing on prompt design pitfalls like over-specification and handling null responses.

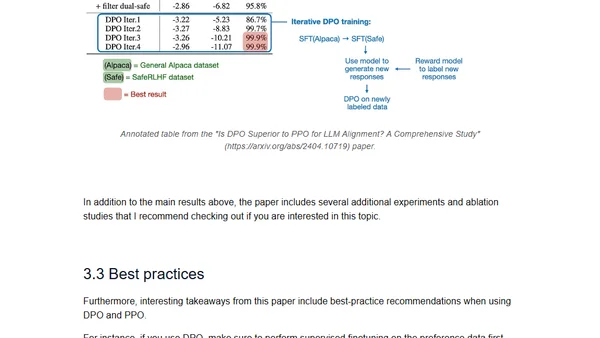

A technical review of April 2024's major open LLM releases (Mixtral, Llama 3, Phi-3, OpenELM) and a comparison of DPO vs PPO for LLM alignment.

A review and comparison of the latest open LLMs (Mixtral, Llama 3, Phi-3, OpenELM) and a study on DPO vs. PPO for LLM alignment.

Argues against using LLMs to generate SQL queries for novel business questions, highlighting the importance of human analysts for precision.

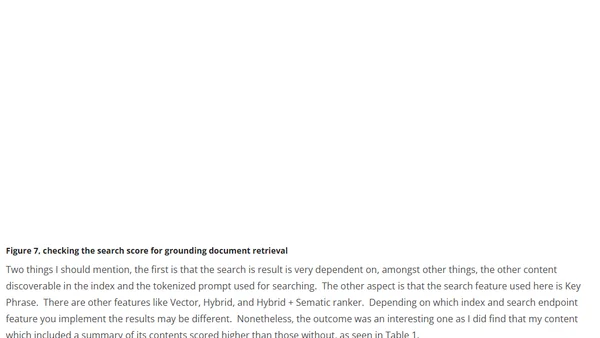

A technical guide on using Azure AI Language Studio to summarize and optimize grounding documents for improving RAG-based AI solutions.

Practical tips for writing technical documentation that is optimized for LLM question-answering tools, improving developer experience.

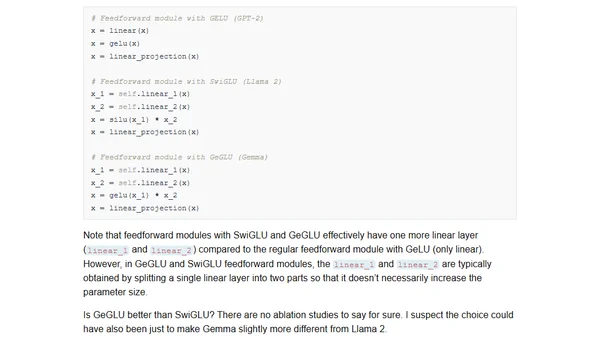

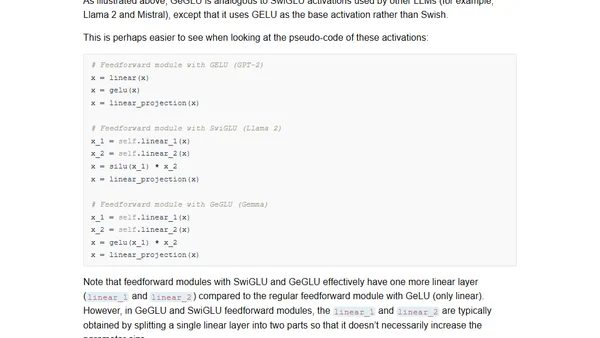

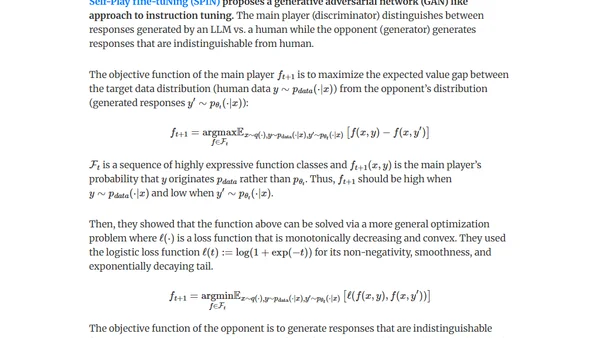

A summary of key AI research papers from February 2024, focusing on new open-source LLMs, small fine-tuned models, and efficient fine-tuning techniques.

A summary of February 2024 AI research, covering new open-source LLMs like OLMo and Gemma, and a study on small, fine-tuned models for text summarization.

Explores the gap between generative AI's perceived quality in open-ended play and its practical effectiveness for specific, goal-oriented tasks.

A developer's critical reflection on GitHub Copilot's impact, questioning if its AI assistance is creating accessibility and quality divides in software development.

Explores methods for generating synthetic data (distillation and self-improvement) for LLM pretraining, instruction-tuning, and preference-tuning.

A guide on running a Large Language Model (LLM) locally using Ollama for privacy and offline use, covering setup and performance tips.

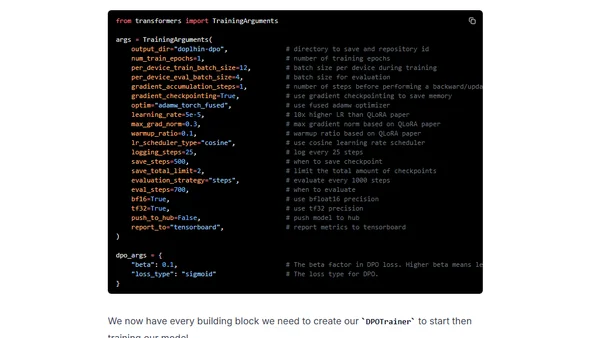

A technical guide on using Direct Preference Optimization (DPO) with Hugging Face's TRL library to align and improve open-source large language models in 2024.

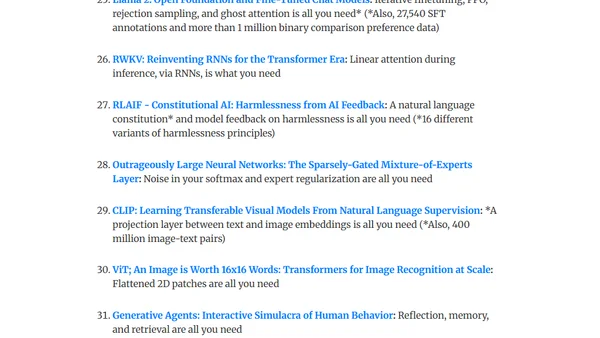

A curated reading list of fundamental language modeling papers with summaries, designed to help start a weekly paper club for learning and discussion.

Investigates why the ChatGPT 3.5 API sometimes refuses to summarize arXiv papers, exploring prompts, content, and model behavior.

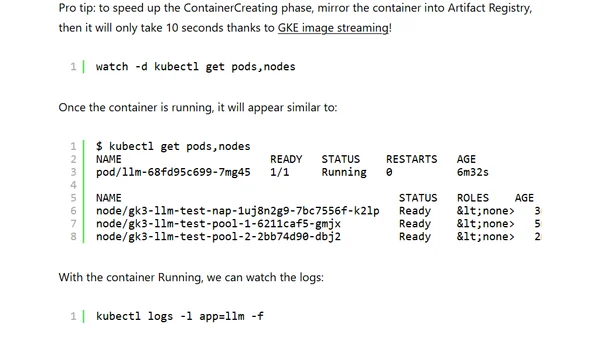

A guide to deploying and running your own LLM on Google Kubernetes Engine (GKE) Autopilot for control, privacy, and cost management.