Optimizing Transformers for GPUs with Optimum

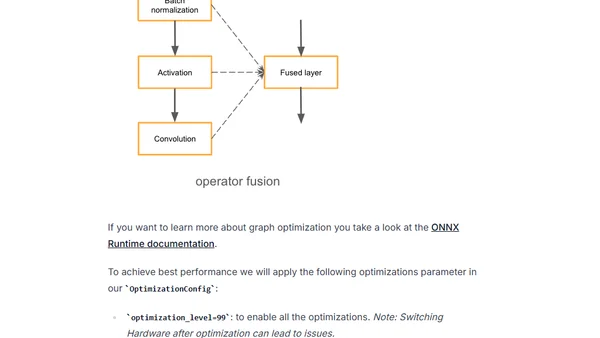

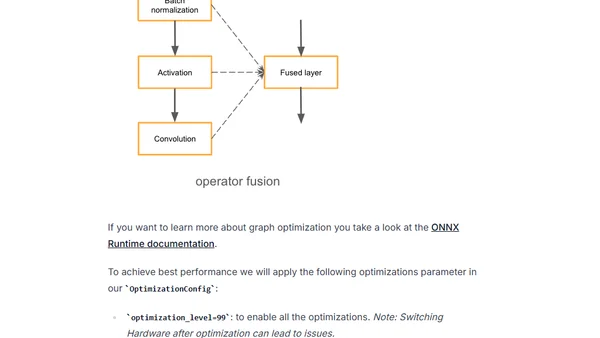

Learn to optimize Hugging Face Transformers models for GPU inference using Optimum and ONNX Runtime to reduce latency.

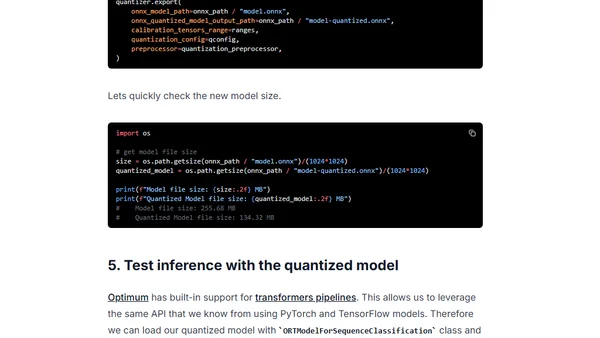

Learn how to use Hugging Face Optimum and ONNX Runtime to apply static quantization to a DistilBERT model, reducing latency by roughly 3x.

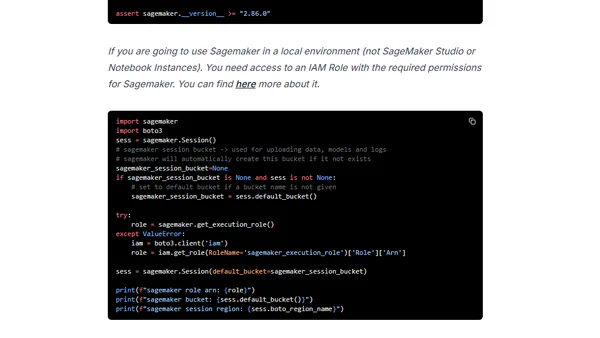

A guide to deploying Hugging Face's DistilBERT model for serverless inference with Amazon SageMaker, covering setup and deployment steps.