Variational Autoencoders Explained

A technical explanation of Variational Autoencoders (VAEs), covering their theory, latent space, and how they generate new data.

Explores the evolution from basic Autoencoders to Beta-VAE, covering their architecture, mathematical notation, and applications in dimensionality reduction.

Explores Bayesian methods for quantifying uncertainty in deep neural networks, moving beyond single-point weight estimates.

A review and tips for Georgia Tech's OMSCS CS7642 Reinforcement Learning course, covering workload, projects, and key learnings.

Explains the attention mechanism in deep learning, its motivation from human perception, and its role in seq2seq models and in the Transformer.

Highlights from a deep learning conference covering optimization algorithms' impact on generalization and human-in-the-loop efficiency.

A practical guide to implementing a hyperparameter tuning script for machine learning models, based on real-world experience from Taboola's engineering team.

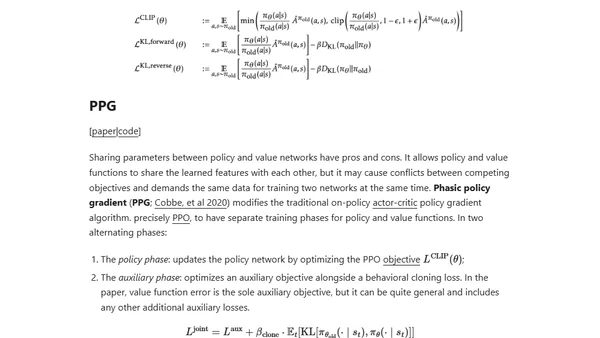

A comprehensive overview of policy gradient algorithms in reinforcement learning, covering key concepts, notations, and various methods.

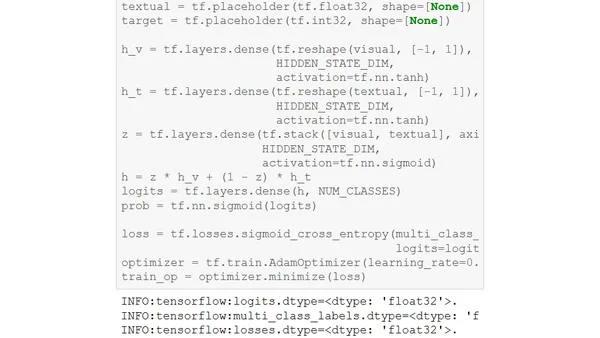

Explains the Gated Multimodal Unit (GMU), a deep learning architecture for intelligently fusing data from different sources like images and text.

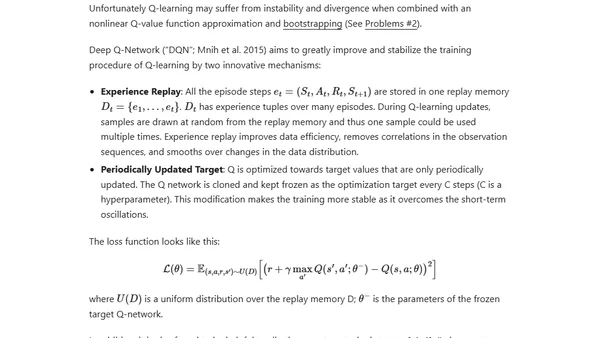

An introductory guide to Reinforcement Learning (RL), covering key concepts, algorithms like SARSA and Q-learning, and its role in AI breakthroughs.

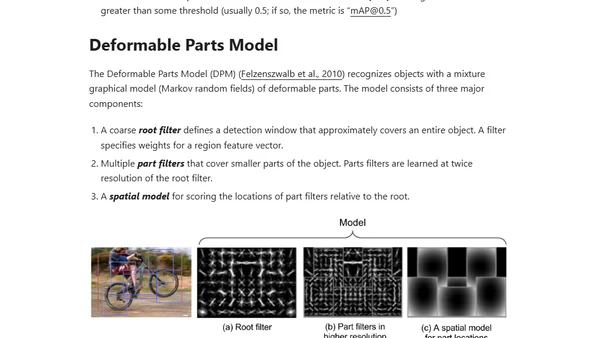

Explores the R-CNN family of models for object detection, covering R-CNN, Fast R-CNN, Faster R-CNN, and Mask R-CNN with technical details.

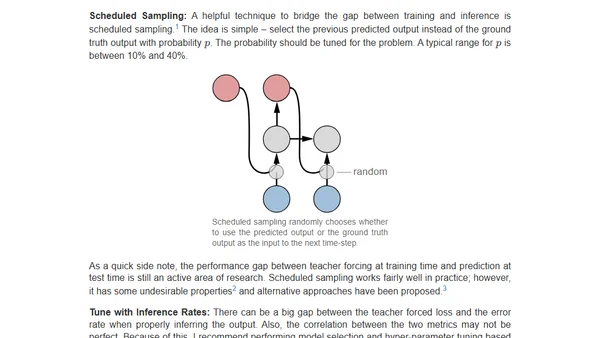

Practical tips for training sequence-to-sequence models with attention, focusing on debugging and ensuring the model learns to condition on input.

Explores classic CNN architectures for image classification, including AlexNet, VGG, and ResNet, as foundational models for object detection.

A retrospective on the transformative impact of deep learning over the past five years, covering its rise, key applications, and future potential.

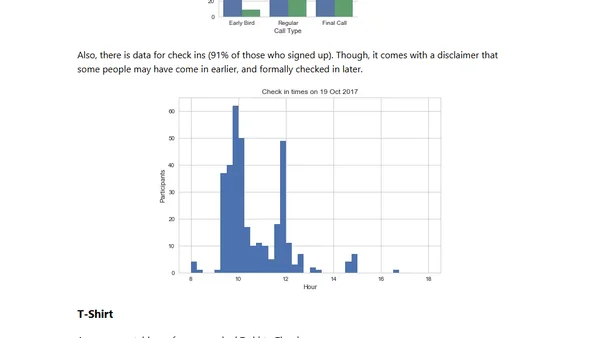

A recap of PyData Warsaw 2017, covering key talks, new package announcements, and analytics on the conference's international attendees.

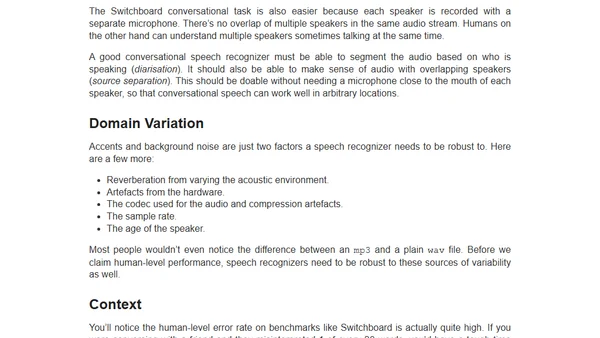

Argues that speech recognition hasn't reached human-level performance, highlighting persistent challenges with accents, noise, and semantic errors.

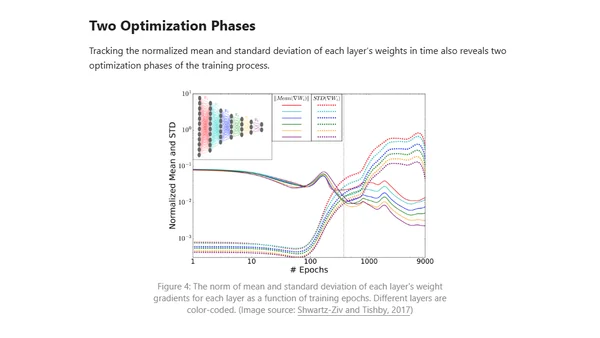

Explores applying information theory, specifically the Information Bottleneck method, to analyze training phases and learning bounds in deep neural networks.

Explains the math behind GANs, their training challenges, and introduces WGAN as a solution for improved stability.

A comparison of PyTorch and TensorFlow deep learning frameworks, focusing on programmability, flexibility, and ease of use for different project scales.

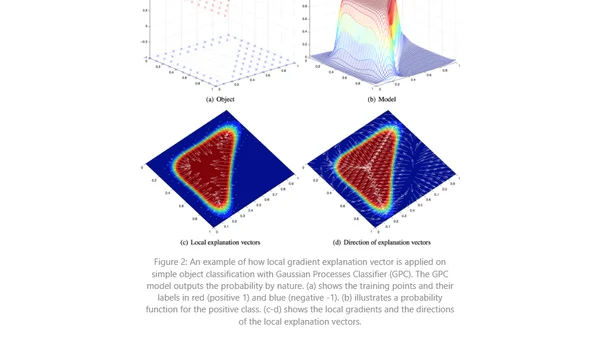

Explores the importance of interpreting ML model predictions, especially in regulated fields, and reviews interpretable models such as linear regression.