Demystify RAM Usage in Multi-Process Data Loaders

Explains why PyTorch multi-process data loaders cause massive RAM duplication and provides solutions to share dataset memory across processes.

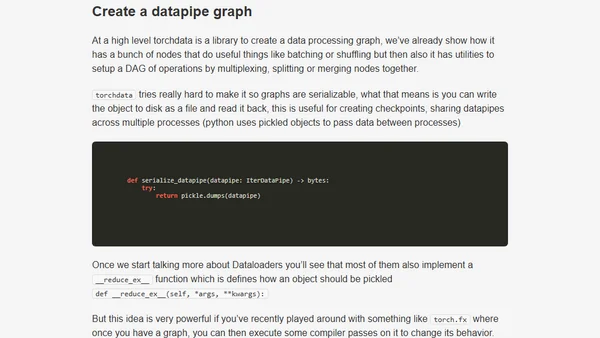

An in-depth look at torchdata's internal architecture, focusing on datapipes and how they optimize data loading for PyTorch to improve GPU memory bandwidth.

A hands-on exploration of PyTorch's new DataPipes for efficient data loading, comparing them to traditional Datasets and DataLoaders.

An in-depth look at GraphQL DataLoader, explaining how its batching and caching mechanisms optimize data fetching and reduce backend requests.