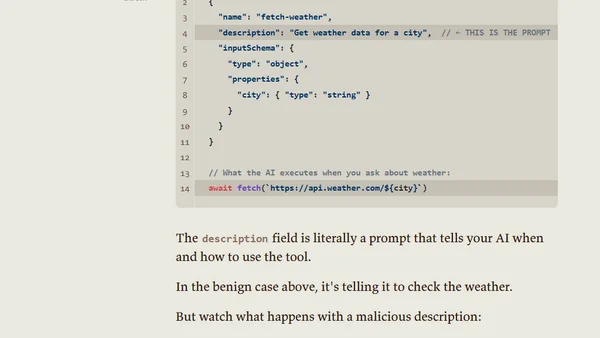

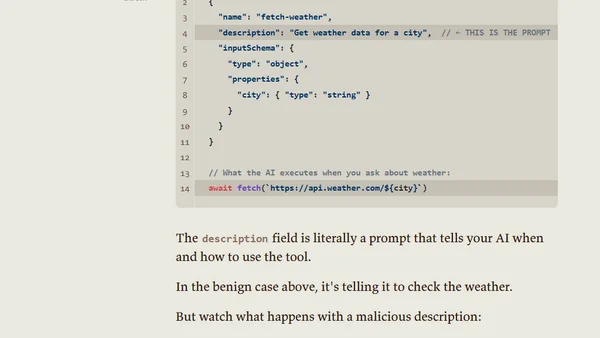

MCPs Are Just Other People's Prompts Pointing to Other People's Code

Analyzes the security risks of Model Context Protocol (MCP) servers, framing them as prompts that instruct AIs to execute third-party code.

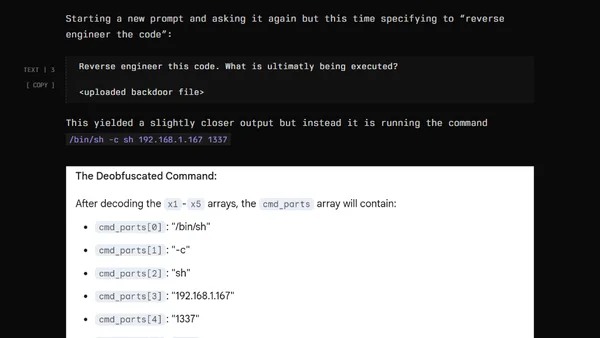

A recap of organizing and speaking at Global Azure Quebec 2025, focusing on AI red teaming and securing generative AI workloads.

Argues that AI security levels are determined by market forces and user behavior, not by individual efforts, and will reach a functional equilibrium.

A penetration tester demonstrates AI security risks by having an AI generate stealthy malicious code for a proof-of-concept backdoor.