No, We Don't Have to Choose Batch Sizes As Powers Of 2

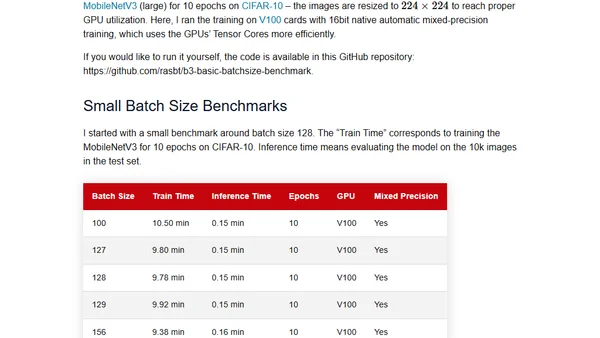

Read OriginalThis article critically examines the long-held convention of using batch sizes that are powers of 2 for neural network training. It explores the theoretical justifications, such as memory alignment and GPU efficiency with Tensor Cores, and questions whether these benefits hold up in practical, real-world scenarios.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser