Understanding and Coding the Self-Attention Mechanism of Large Language Models From Scratch

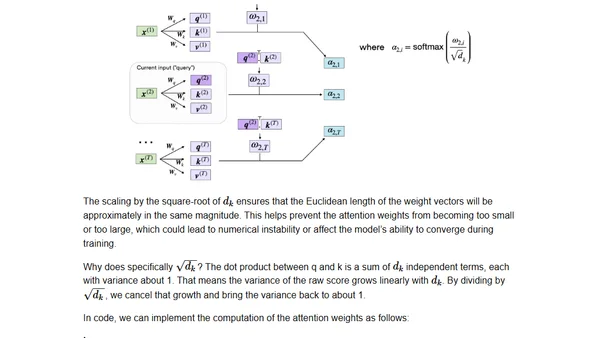

This article provides a detailed, step-by-step tutorial on implementing the scaled dot-product self-attention mechanism from the original transformer paper. It explains why the concept matters in NLP and deep learning, then walks through coding it from the ground up, starting with embedding an input sentence.
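To make the idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is not the article's code. It assumes randomly initialized embeddings and projection matrices, a toy sentence, and small dimensions (embedding size 8, query/key/value size 4). The helper names `matmul`, `softmax`, `random_matrix`, and `self_attention` are hypothetical and chosen for illustration. The core computation is softmax(Q Kᵀ / √d_k) V, where Q, K, and V are linear projections of the embedded tokens.

```python
import math
import random


def matmul(a, b):
    """Multiply matrix a (n x m) by matrix b (m x p); matrices are lists of rows."""
    return [
        [sum(a_ik * b[idx][j] for idx, a_ik in enumerate(row)) for j in range(len(b[0]))]
        for row in a
    ]


def transpose(m):
    """Swap the rows and columns of a matrix."""
    return [list(col) for col in zip(*m)]


def softmax(row):
    """Turn a row of scores into weights that are positive and sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    """Hypothetical stand-in for learned weights: uniform values in [-1, 1]."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention: softmax(Q K^T / sqrt(d_k)) V."""
    q = matmul(x, w_q)  # queries, one row per token
    k = matmul(x, w_k)  # keys, one row per token
    v = matmul(x, w_v)  # values, one row per token
    d_k = len(w_k[0])
    scores = matmul(q, transpose(k))  # pairwise query-key dot products
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Toy setup (assumed, for illustration only): embed each word of a sentence.
rng = random.Random(123)
words = "the cat sat on the mat".split()
vocab = {word: idx for idx, word in enumerate(sorted(set(words)))}
token_ids = [vocab[w] for w in words]

d_in, d_k, d_v = 8, 4, 4
embedding_table = random_matrix(len(vocab), d_in, rng)
x = [embedding_table[i] for i in token_ids]  # one d_in-sized vector per token

w_q = random_matrix(d_in, d_k, rng)
w_k = random_matrix(d_in, d_k, rng)
w_v = random_matrix(d_in, d_v, rng)

context, attn = self_attention(x, w_q, w_k, w_v)
```

Each row of `attn` is a probability distribution over all tokens in the sentence, and each row of `context` is the matching weighted mix of value vectors. Dividing by √d_k keeps the dot products from growing with the projection size, which would otherwise push the softmax toward near one-hot weights. In practice the weight matrices are learned, and a tensor library would replace the list-based matrix helpers.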
