No, We Don't Have to Choose Batch Sizes As Powers Of 2

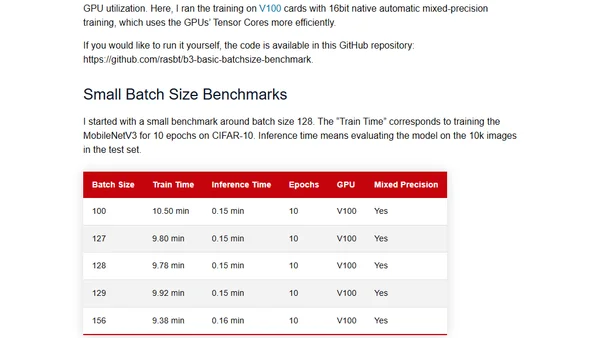

This technical article critically examines the deep learning convention of setting batch sizes to powers of 2 (e.g., 64, 128). It explores the theoretical justifications, such as memory alignment and GPU efficiency for matrix operations, and discusses whether the practice is actually necessary or beneficial in practical training scenarios.
