An Intuition for Attention

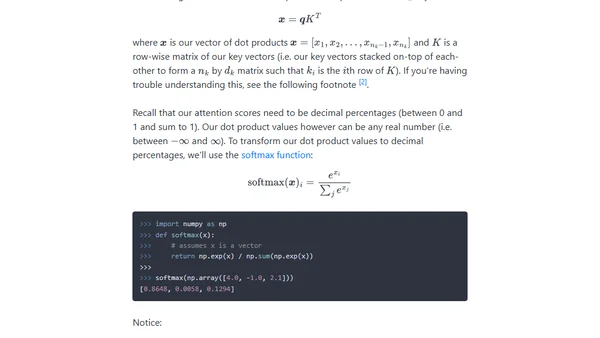

This article gives an intuitive, foundational breakdown of the attention mechanism used in the transformer models behind systems like ChatGPT. It starts with the idea of a key-value lookup, moves on to matching by meaning using weighted sums, shows how word vectors determine attention scores, and arrives at the formal attention equation.
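The progression described above, from a lookup to a weighted sum over values, can be sketched in a few lines. This is a minimal illustration, not code from the original article: it assumes the common scaled dot-product form, softmax(QK^T / sqrt(d_k)) V, and the function names (`softmax`, `dot`, `attend`) and the toy vectors are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product: a simple similarity score between two vectors."""
    return sum(x * y for x, y in zip(a, b))

def attend(query, keys, values):
    """Soft key-value lookup for one query (assumed scaled dot-product form)."""
    d_k = len(query)
    # Score every key against the query instead of requiring an exact match.
    scores = [dot(query, k) / math.sqrt(d_k) for k in keys]
    weights = softmax(scores)
    # The result is a weighted sum of the values, one component at a time.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy data (made up for illustration): three keys, each with a value vector.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, output = attend(query, keys, values)
print(weights)
print(output)
```

An ordinary dictionary lookup returns exactly one value for an exact key match. Here every value contributes, in proportion to how closely its key matches the query, so the first and third keys (which both overlap with the query) receive more weight than the second.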
