We need an evolved robots.txt and regulations to enforce it

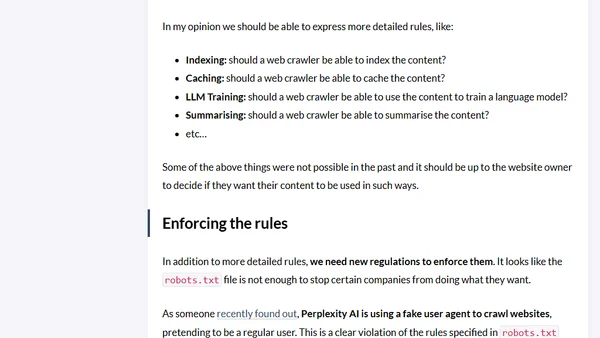

This article critiques the traditional robots.txt standard as insufficient for the AI era. It proposes extending the file to specify permissions for indexing, caching, LLM training, and content summarization. It calls for new regulations to enforce these rules, citing Perplexity AI's alleged use of a fake user agent to bypass robots.txt as a key example of why enforcement is needed.
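To make the proposal concrete, here is a minimal sketch of what per-purpose permissions could look like and how a well-behaved crawler might read them. The directive names (`Indexing`, `Caching`, `Training`, `Summarization`), the `allow`/`disallow` values, the `ExampleBot` agent, and the fallback rules are all assumptions made up for illustration; they are not part of the current robots.txt standard and are not taken from the article.

```python
# Hypothetical purpose names, derived from the four uses listed in the summary.
PURPOSES = ("indexing", "caching", "training", "summarization")

# Hypothetical extended robots.txt; this syntax is an assumption, not a standard.
SAMPLE = """\
User-agent: *
Indexing: allow
Caching: allow
Training: disallow
Summarization: disallow

User-agent: ExampleBot
Training: allow
"""


def parse_policy(text):
    """Parse the hypothetical syntax into {user_agent: {purpose: bool}}."""
    policy = {}
    agents = []             # user agents of the group currently being read
    last_was_agent = False  # consecutive User-agent lines share one group
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        key, value = (part.strip().lower() for part in line.split(":", 1))
        if key == "user-agent":
            if not last_was_agent:
                agents = []
            agents.append(value)
            policy.setdefault(value, {})
            last_was_agent = True
        else:
            last_was_agent = False
            if key in PURPOSES and value in ("allow", "disallow"):
                for agent in agents:
                    policy[agent][key] = value == "allow"
    return policy


def is_permitted(policy, user_agent, purpose):
    """Check one purpose for one agent.

    Assumed rules: an agent-specific entry wins, then the "*" group,
    and anything unspecified defaults to allowed.
    """
    specific = policy.get(user_agent.lower(), {})
    if purpose in specific:
        return specific[purpose]
    return policy.get("*", {}).get(purpose, True)


policy = parse_policy(SAMPLE)
print(is_permitted(policy, "ExampleBot", "training"))   # agent-specific rule
print(is_permitted(policy, "OtherBot", "training"))     # falls back to "*"
```

A check like this is purely advisory: a crawler that sends a false user agent never reaches it honestly, which is why the article pairs the extended file with regulation.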
