The Accessibility of GPT-2 - Text Generation and Fine-tuning

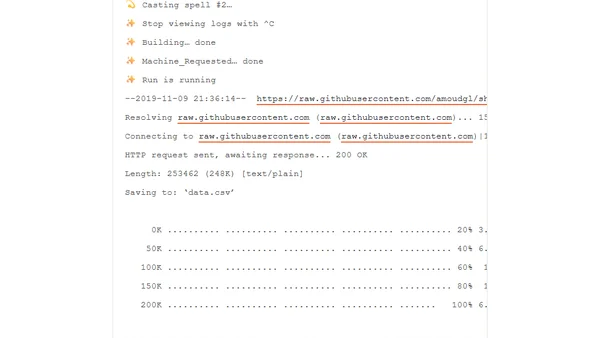

This article provides a technical tutorial on leveraging the HuggingFace API to work with OpenAI's GPT-2 transformer model. It explains the basics of Natural Language Generation (NLG), details the process of text generation and fine-tuning the model on custom datasets, and mentions using platforms like Spell to manage compute resources.
