Beyond Standard LLMs

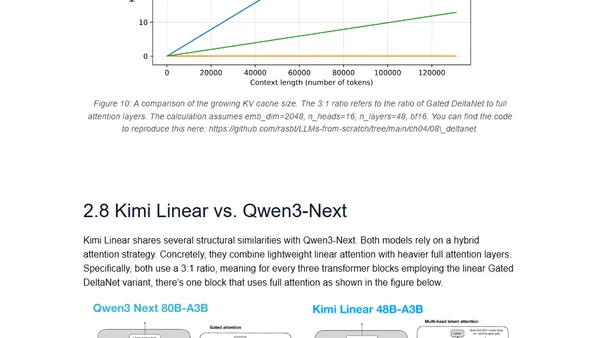

This article explores emerging alternatives to standard autoregressive transformer LLMs: linear attention hybrids such as Qwen3-Next and Kimi, text diffusion models, code world models, and small recursive transformers. It compares their efficiency and performance against traditional architectures and serves as an introduction to the evolving LLM landscape beyond conventional transformer designs.

