Improving LoRA: Implementing Weight-Decomposed Low-Rank Adaptation (DoRA) from Scratch

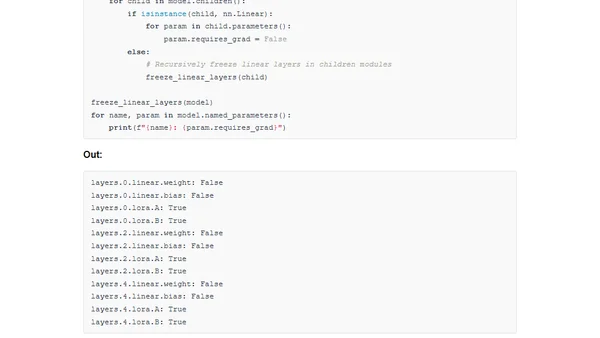

This article is a detailed, hands-on tutorial on implementing Weight-Decomposed Low-Rank Adaptation (DoRA), a recently proposed enhancement to the popular LoRA technique for efficiently fine-tuning large models such as LLMs. It explains the core concepts of LoRA, compares LoRA with DoRA, and walks through a from-scratch PyTorch implementation that demonstrates how the improved method works and where its potential performance gains come from.
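
To make the LoRA-vs-DoRA comparison concrete, here is a minimal pure-Python sketch. It is not the article's PyTorch code. It assumes the DoRA paper's convention of a d×k pretrained weight W0, a low-rank update B @ A (B is d×r, A is r×k), and a trainable per-column magnitude vector m initialized to the column norms of W0. The α/r scaling follows the original LoRA paper. The helper names (`lora_weight`, `dora_weight`, `column_norms`) are hypothetical illustrations.

```python
import math
import random


def matmul(a, b):
    """Multiply matrices stored as lists of rows: (n x p) @ (p x q)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def column_norms(w):
    """L2 norm of each column of w (the column-wise norm ||W||_c)."""
    return [math.sqrt(sum(row[j] ** 2 for row in w)) for j in range(len(w[0]))]


def lora_weight(w0, a, b, scale):
    """LoRA: W0 + scale * (B @ A). W0 stays frozen; only A and B are trained."""
    delta = matmul(b, a)  # (d x r) @ (r x k) -> d x k low-rank update
    return [[w + scale * dw for w, dw in zip(rw, rd)] for rw, rd in zip(w0, delta)]


def dora_weight(w0, a, b, scale, m):
    """DoRA: m * V / ||V||_c, where V = W0 + scale * (B @ A).

    V only supplies the direction (each column is rescaled to unit length);
    the trainable vector m sets each column's magnitude.
    """
    v = lora_weight(w0, a, b, scale)
    norms = column_norms(v)
    return [[m[j] * row[j] / norms[j] for j in range(len(row))] for row in v]


random.seed(0)
d, k, r, alpha = 4, 3, 2, 4.0
w0 = [[random.gauss(0.0, 1.0) for _ in range(k)] for _ in range(d)]  # frozen weight
a = [[random.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]  # r x k, random init
b = [[0.0] * r for _ in range(d)]  # d x r, zero init, so the update starts at 0
m = column_norms(w0)  # magnitude initialized from the pretrained columns

# At initialization DoRA reproduces the pretrained weight (up to rounding).
w_init = dora_weight(w0, a, b, alpha / r, m)

# Hypothetical: pretend a training step changed B.
b = [[0.5] * r for _ in range(d)]
w_lora = lora_weight(w0, a, b, alpha / r)       # magnitude and direction move together
w_dora = dora_weight(w0, a, b, alpha / r, m)    # column norms stay pinned to m
```

The design point the sketch isolates is the decomposition: in plain LoRA a single update changes both the length and the direction of each weight column, whereas DoRA lets the low-rank update steer only the direction and learns the magnitude separately through `m`.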
