Implementing A Byte Pair Encoding (BPE) Tokenizer From Scratch

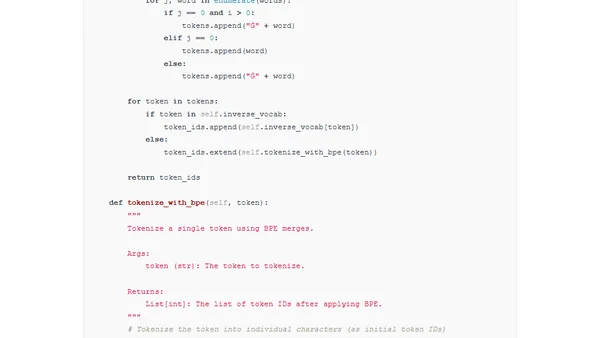

This article provides a detailed, educational implementation of the Byte Pair Encoding (BPE) tokenization algorithm from scratch. It explains the core concepts of BPE, which is used in models such as GPT-2 through GPT-4 and Llama 3, and contrasts the educational implementation with production libraries such as OpenAI's tiktoken. It covers converting text to bytes and the motivation for subword tokenization, and includes code for training a tokenizer.
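To make the summary concrete, here is a minimal sketch of byte-level BPE training. It is not the article's code: the function names `train_bpe`, `merge_pair`, and `decode` are hypothetical, and the sketch assumes the simplest variant (start from the 256 raw byte values, repeatedly merge the most frequent adjacent pair, no regex pre-splitting as production tokenizers such as tiktoken use).

```python
from collections import Counter


def merge_pair(ids, pair, new_id):
    """Replace every non-overlapping occurrence of `pair` in `ids` with `new_id`."""
    out = []
    i = 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2  # skip both members of the merged pair
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text, num_merges):
    """Learn up to `num_merges` merges; returns (merges, encoded training ids)."""
    ids = list(text.encode("utf-8"))  # text -> byte values in 0..255
    merges = {}  # (left_id, right_id) -> new token id, in training order
    for _ in range(num_merges):
        pairs = Counter(zip(ids, ids[1:]))  # count adjacent pairs
        if not pairs:
            break
        pair, count = pairs.most_common(1)[0]
        if count < 2:
            break  # nothing repeats, so further merges would not compress
        new_id = 256 + len(merges)  # new ids start right after the byte range
        merges[pair] = new_id
        ids = merge_pair(ids, pair, new_id)
    return merges, ids


def decode(ids, merges):
    """Map token ids back to text by expanding each merge into its bytes."""
    vocab = {i: bytes([i]) for i in range(256)}
    for (left, right), new_id in merges.items():  # dicts keep insertion order
        vocab[new_id] = vocab[left] + vocab[right]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")


if __name__ == "__main__":
    sample = "the cat in the hat"
    merges, ids = train_bpe(sample, num_merges=5)
    print(len(sample.encode("utf-8")), "bytes ->", len(ids), "tokens")
    print(decode(ids, merges))
```

Because every merge is recorded as a pair of existing ids, decoding only needs to replay the merges in training order to rebuild each token's byte string, which is why the round trip back to the original text is lossless in this sketch.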
