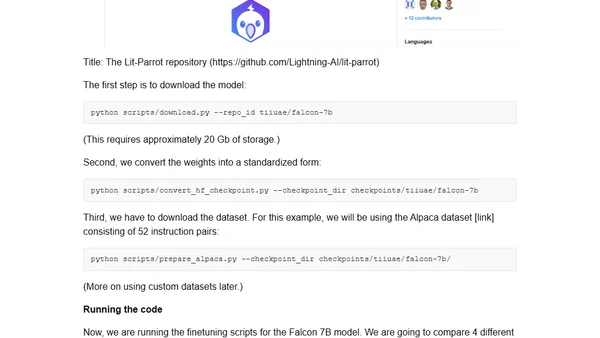

Finetuning Falcon LLMs More Efficiently With LoRA and Adapters

This technical article compares two parameter-efficient finetuning methods, LoRA and adapters, applied to the open-source Falcon LLM. It explains how these techniques make it possible to finetune Falcon in about one hour on a single GPU instead of days on multiple GPUs. It also covers the benefits of customizing open-source models for specific tasks and domains.
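The article's own code is not reproduced here. The following is a minimal, dependency-free Python sketch of the two ideas, written as an illustration and not as the article's implementation. LoRA keeps a pretrained weight matrix frozen and adds a trainable low-rank update B·A. An adapter inserts a small trainable bottleneck with a residual connection. The names (`LoRALinear`, `Adapter`, `lora_param_count`, and the others) and the sizes (4096, rank 8, bottleneck 64) are hypothetical choices made for illustration. Biases and the training loop are omitted. The adapter shown is the classic bottleneck style, and the specific adapter variant benchmarked in the article may be built differently.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update: y = W x + (alpha / r) * B (A x)."""

    def __init__(self, W, r=2, alpha=4, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(W), len(W[0])
        self.W = W  # pretrained weights, kept frozen (d_out x d_in)
        # A (r x d_in) starts small and random; B (d_out x r) starts at zero,
        # so the layer initially behaves exactly like the frozen W.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * d for b, d in zip(base, delta)]


class Adapter:
    """Bottleneck adapter with a residual connection: y = x + W_up relu(W_down x)."""

    def __init__(self, d, m, seed=0):
        rng = random.Random(seed)
        # Down-projection d -> m and up-projection m -> d (biases omitted).
        self.down = [[rng.gauss(0.0, 0.01) for _ in range(d)] for _ in range(m)]
        self.up = [[0.0] * m for _ in range(d)]  # zero init: adapter starts as identity

    def forward(self, x):
        h = [max(0.0, v) for v in matvec(self.down, x)]
        return [xi + u for xi, u in zip(x, matvec(self.up, h))]


def full_param_count(d_in, d_out):
    return d_in * d_out  # every entry of W is trained in full finetuning


def lora_param_count(d_in, d_out, r):
    return r * (d_in + d_out)  # A has r*d_in entries, B has d_out*r


def adapter_param_count(d, m):
    return 2 * d * m  # down (m*d) + up (d*m), ignoring biases


if __name__ == "__main__":
    W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    x = [1.0, 2.0, 3.0]
    print(LoRALinear(W, r=2).forward(x))  # [1.0, 2.0]: B is zero at init
    print(Adapter(d=3, m=2).forward(x))   # [1.0, 2.0, 3.0]: up is zero at init
    # prints "16777216 65536 524288"
    print(full_param_count(4096, 4096), lora_param_count(4096, 4096, 8), adapter_param_count(4096, 64))
```

For an illustrative 4096×4096 projection, rank-8 LoRA trains 65,536 parameters versus 16,777,216 for the full matrix (about 0.4%), and a 64-dimensional bottleneck adapter trains 524,288. Shrinking the trainable parameter set this way is the main reason these methods need far less memory and compute than full finetuning.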
