Creating Confidence Intervals for Machine Learning Classifiers

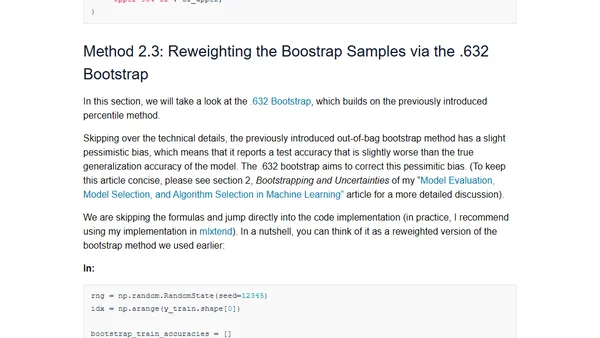

This article provides a practical overview of several methods for constructing confidence intervals that quantify the performance and uncertainty of machine learning and deep learning classifiers. It covers normal approximation intervals, several bootstrapping approaches applied to the training and test sets, and intervals obtained by retraining the model, with the aim of improving how research results are reported and how models are compared.
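To make two of these methods concrete, here is a minimal Python sketch. It assumes accuracy is the metric of interest and that `y_true` and `y_pred` are equal-length lists of labels from a held-out test set. The function names `normal_approx_interval` and `bootstrap_test_interval` are hypothetical and do not come from the article. The normal approximation treats each test prediction as an independent Bernoulli trial, so it is most reasonable for large test sets. The bootstrap variant resamples only the test predictions and never refits the model. The training-set bootstrap and the retraining-based intervals both require refitting the classifier and are not shown here.

```python
import math
import random


def normal_approx_interval(y_true, y_pred, z=1.96):
    """Normal-approximation interval for classification accuracy (sketch).

    Assumes test predictions are independent Bernoulli trials, so the
    standard error of the accuracy is sqrt(acc * (1 - acc) / n).
    z = 1.96 gives an approximately 95% interval.
    """
    n = len(y_true)
    acc = sum(t == p for t, p in zip(y_true, y_pred)) / n
    half_width = z * math.sqrt(acc * (1.0 - acc) / n)
    return acc - half_width, acc + half_width


def bootstrap_test_interval(y_true, y_pred, n_rounds=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval from resampling the test set (sketch).

    The model is NOT retrained: each round draws n (label, prediction)
    pairs with replacement and recomputes the accuracy. The interval is
    read off the sorted bootstrap accuracies at alpha/2 and 1 - alpha/2.
    """
    rng = random.Random(seed)  # fixed seed for reproducibility
    n = len(y_true)
    pairs = list(zip(y_true, y_pred))
    accs = []
    for _ in range(n_rounds):
        sample = [pairs[rng.randrange(n)] for _ in range(n)]
        accs.append(sum(t == p for t, p in sample) / n)
    accs.sort()
    lower = accs[round((alpha / 2) * (n_rounds - 1))]
    upper = accs[round((1 - alpha / 2) * (n_rounds - 1))]
    return lower, upper


if __name__ == "__main__":
    # Hypothetical toy data: 90 of 100 predictions are correct.
    y_true = [1] * 90 + [0] * 10
    y_pred = [1] * 100
    print(normal_approx_interval(y_true, y_pred))  # ≈ (0.8412, 0.9588)
    print(bootstrap_test_interval(y_true, y_pred))
```

The two intervals should usually be similar on a test set of this size. The bootstrap avoids the Bernoulli/normal assumption at the cost of extra computation, which is one reason to report which method was used alongside the interval.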
