Running Python on a serverless GPU instance for machine learning inference

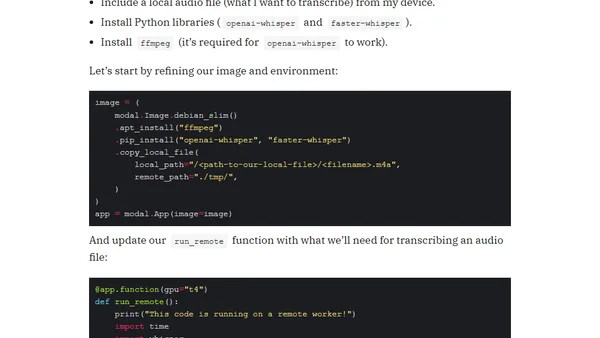

This technical article explains how to use Modal.com to run Python code on serverless Nvidia T4 GPU instances for machine learning inference. It compares performance against CPU-based AWS Lambda, provides a step-by-step setup guide, and works through a practical example: running a speech-to-text transcription model on the GPU to significantly reduce processing time.
