Task-specific knowledge distillation for BERT using Transformers and Amazon SageMaker

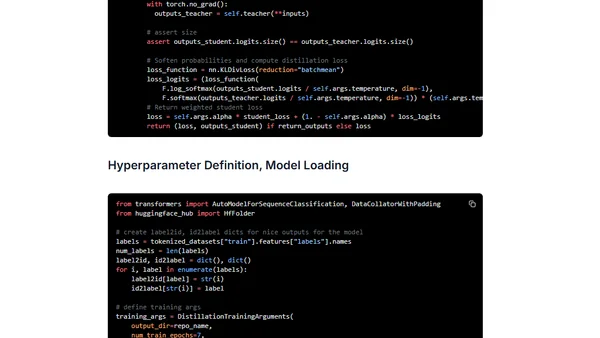

This technical guide provides an end-to-end example of task-specific knowledge distillation for text classification. It shows how to train a compact BERT-Tiny student model to mimic a larger BERT-base teacher model on the SST-2 dataset, using PyTorch, Hugging Face Transformers, and Amazon SageMaker.
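To make "mimic" concrete, here is a minimal sketch of a commonly used distillation objective for a single example: a weighted sum of the usual cross-entropy on the true label and a temperature-softened KL divergence between the teacher's and student's output distributions. It is written in plain Python so the arithmetic is visible; in practice it would be computed on PyTorch tensors inside the training loop. The function names, the `alpha` weight, and the `temperature` value are illustrative assumptions, not values taken from the original guide.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits, softened by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      alpha=0.5, temperature=2.0):
    """Hypothetical helper: alpha * CE(student, label) + (1 - alpha) * T^2 * KL(teacher || student).

    alpha and temperature are assumed hyperparameters chosen for illustration.
    """
    # Hard-label term: cross-entropy of the student against the true class (T = 1).
    student_probs = softmax(student_logits)
    hard_loss = -math.log(student_probs[label])

    # Soft-label term: KL divergence between the softened teacher and student distributions.
    teacher_soft = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    soft_loss = sum(
        t * (math.log(t) - math.log(s))
        for t, s in zip(teacher_soft, student_soft)
        if t > 0
    )

    # The T^2 factor keeps the soft-term gradients on a comparable scale across temperatures.
    return alpha * hard_loss + (1 - alpha) * (temperature ** 2) * soft_loss


# Binary sentiment example (as in SST-2): the teacher is confident in class 1, the student less so.
loss = distillation_loss(student_logits=[0.2, 0.9], teacher_logits=[-1.5, 2.5], label=1)
```

A higher `temperature` flattens both distributions so the student also learns from the teacher's relative confidence in the wrong class, while `alpha` trades off fitting the labels against matching the teacher.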
