Scale LLM Inference on Amazon SageMaker with Multi-Replica Endpoints

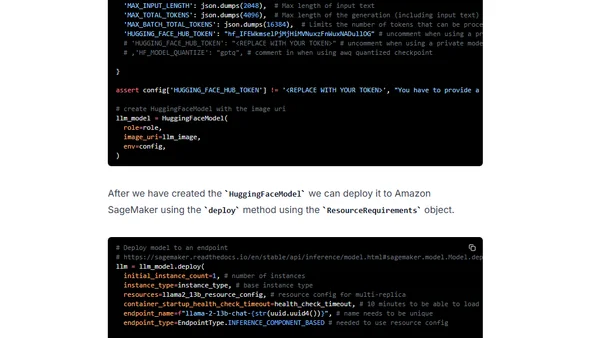

This technical tutorial explains how to use Amazon SageMaker's new Hardware Requirements object to deploy multiple replicas of a Large Language Model (LLM), such as Llama 13B, on a single instance (e.g., p4d.24xlarge). It covers setting up the SageMaker SDK, configuring the Hugging Face LLM DLC, and deploying the model replicas to improve inference performance and cost efficiency.
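Choosing how many replicas fit on one instance is mostly arithmetic: divide the GPUs by the GPUs each replica needs, then split CPU and memory across those replicas. The sketch below is a hypothetical helper, not code from the tutorial. It assumes the published ml.p4d.24xlarge specs (8 GPUs, 96 vCPUs, 1152 GiB RAM). The reserved CPU and memory amounts are illustrative guesses left for the host. The output dict uses the key names (`copies`, `num_accelerators`, `num_cpus`, `memory` in MiB) that, as far as we know, the SageMaker Python SDK's `ResourceRequirements(requests=...)` accepts. Verify these against the SDK version you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceSpec:
    """Hardware totals for one instance type."""
    name: str
    gpus: int
    vcpus: int
    memory_mib: int


# Published specs for ml.p4d.24xlarge: 8x NVIDIA A100, 96 vCPUs, 1152 GiB RAM.
P4D_24XLARGE = InstanceSpec("ml.p4d.24xlarge", gpus=8, vcpus=96, memory_mib=1152 * 1024)


def plan_replicas(instance, gpus_per_replica, reserved_vcpus=6, reserved_memory_gib=32):
    """Hypothetical helper: split one instance into equal model replicas.

    reserved_vcpus / reserved_memory_gib are illustrative amounts held back
    for the host and serving stack; they are assumptions, not SageMaker defaults.
    """
    if not 0 < gpus_per_replica <= instance.gpus:
        raise ValueError("gpus_per_replica must be between 1 and the instance GPU count")
    copies = instance.gpus // gpus_per_replica  # whole replicas that fit on the GPUs
    usable_vcpus = instance.vcpus - reserved_vcpus
    usable_memory_mib = instance.memory_mib - reserved_memory_gib * 1024
    # Keys assumed to match the `requests` dict of SageMaker's ResourceRequirements.
    return {
        "copies": copies,
        "num_accelerators": gpus_per_replica,
        "num_cpus": usable_vcpus // copies,
        "memory": usable_memory_mib // copies,  # MiB per replica
    }


if __name__ == "__main__":
    # e.g. a 13B model sharded across 2 GPUs per replica
    print(plan_replicas(P4D_24XLARGE, gpus_per_replica=2))
```

With 2 GPUs per replica this yields 4 copies, each requesting 22 vCPUs and 286720 MiB of memory. Holding back some CPU and memory leaves room for the container runtime, so the replica requests together do not claim the entire host.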

