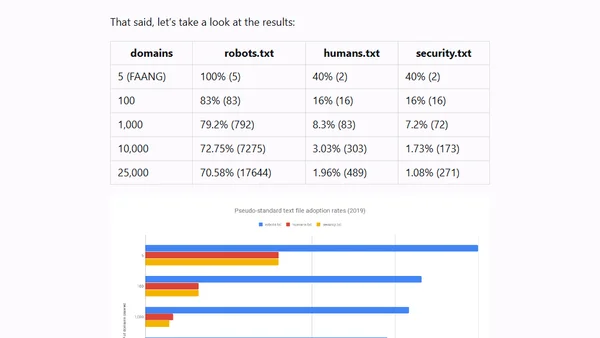

Pseudo-standard Text File Adoption Rates (2019)

A 2019 technical analysis measuring the adoption of pseudo-standard text files (robots.txt, humans.txt, and security.txt) across popular websites. The article details the Node.js-based crawling methodology, the data collection from Alexa top sites, and challenges such as memory limits and redirects, then presents findings on how many sites implement these files.
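The original article's crawler code is not reproduced in this summary. As a rough illustration of the approach it describes, the sketch below builds the URLs a crawler would probe for each domain and computes an adoption rate from the collected results. The helper names (`candidateUrls`, `adoptionRate`), the shape of the result objects, and the choice of `/.well-known/security.txt` as the only security.txt location are assumptions made for this example, not details taken from the article.

```javascript
// Hypothetical sketch; names and paths are assumptions, not the article's code.
const WELL_KNOWN_PATHS = {
  robots: '/robots.txt',
  humans: '/humans.txt',
  // Assumed location; some sites also serve /security.txt at the root.
  security: '/.well-known/security.txt',
};

// Build the URL to probe for each file on a given domain.
function candidateUrls(domain) {
  const urls = {};
  for (const [name, path] of Object.entries(WELL_KNOWN_PATHS)) {
    urls[name] = new URL(path, `https://${domain}`).href;
  }
  return urls;
}

// Fraction of crawled sites where the named file was found.
// `results` is an array of objects such as { robots: true, humans: false }.
function adoptionRate(results, name) {
  if (results.length === 0) return 0;
  const hits = results.filter((r) => r[name] === true).length;
  return hits / results.length;
}
```

A real crawl would also need the fetch step, with redirect handling and bounded concurrency to stay within memory limits, which is where the article reports most of its difficulties.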
