Secure AI Prompts with PyRIT Validation & Agent Skills

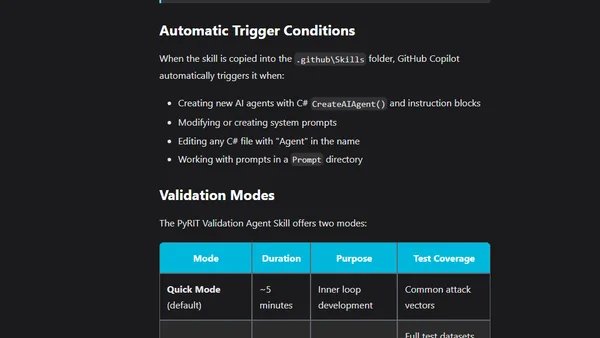

Read OriginalThis article explains how to integrate the PyRIT (Python Risk Identification Tool) as a GitHub Copilot Agent Skill within Visual Studio Code to automatically validate AI prompts for security vulnerabilities during development. It covers common risks like prompt injection, jailbreak attempts, and system prompt leakage, and details how PyRIT's inner-loop validation helps mitigate these threats by testing against various attack vectors.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser