Reducing Toxicity in Language Models

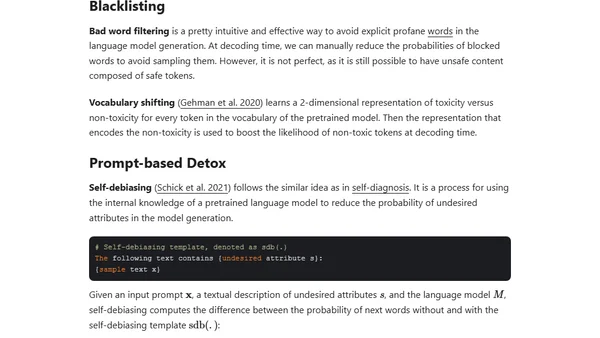

This technical article examines the problem of toxicity, bias, and unsafe content in large pretrained language models. It discusses the difficulties in defining and categorizing toxic language, reviews existing taxonomies such as the OLID dataset hierarchy, and introduces methodologies for mitigating these issues to enable safer real-world deployment of NLP models.
