Controllable Neural Text Generation

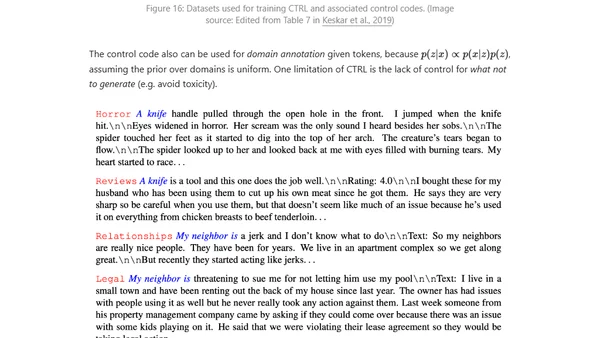

This technical article examines approaches to controllable text generation with large pretrained language models. It covers guided decoding strategies, prompt-design techniques such as P-tuning, and fine-tuning methods that steer outputs toward desired attributes (sentiment, style, or topic) without training the core model from scratch.
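The simplest form of guided decoding leaves the model untouched and adjusts its next-token distribution at sampling time. The sketch below is a minimal illustration under stated assumptions. It uses a toy five-word vocabulary and made-up logits in place of a real language model. It applies plain logit biasing (often called weighted decoding), which adds a fixed bonus to tokens from a target-attribute word list and then renormalizes. This is one generic technique, not necessarily the specific method the article describes. `attribute_words` and `strength` are hypothetical parameters chosen for illustration.

```python
import math

def softmax(logits):
    """Convert raw scores to probabilities (subtract the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def guided_next_token_probs(logits, vocab, attribute_words, strength=2.0):
    """Weighted-decoding sketch: add `strength` to the logit of every token that
    appears in `attribute_words`, then renormalize with softmax.
    Both `attribute_words` and `strength` are hypothetical knobs for illustration."""
    biased = [
        score + (strength if token in attribute_words else 0.0)
        for score, token in zip(logits, vocab)
    ]
    return softmax(biased)

def greedy_pick(probs, vocab):
    """Return the single most probable token (greedy decoding step)."""
    best = max(range(len(probs)), key=lambda i: probs[i])
    return vocab[best]

if __name__ == "__main__":
    # Toy stand-in for one step of a language model's output (assumed values).
    vocab = ["the", "great", "terrible", "movie", "was"]
    logits = [1.0, 0.5, 1.2, 0.3, 0.8]
    positive = {"great", "wonderful", "excellent"}  # hypothetical attribute list

    base = softmax(logits)
    guided = guided_next_token_probs(logits, vocab, positive, strength=2.0)
    print(greedy_pick(base, vocab))    # terrible
    print(greedy_pick(guided, vocab))  # great
```

With no guidance, the highest-scoring token is "terrible". The positive-word bonus shifts the choice to "great" without changing any model weights. The trade-off is that a large `strength` can push the model toward attribute words that are ungrammatical in context, which is one reason more refined guided-decoding methods exist.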
