How to set up secure access to your local LLM UI

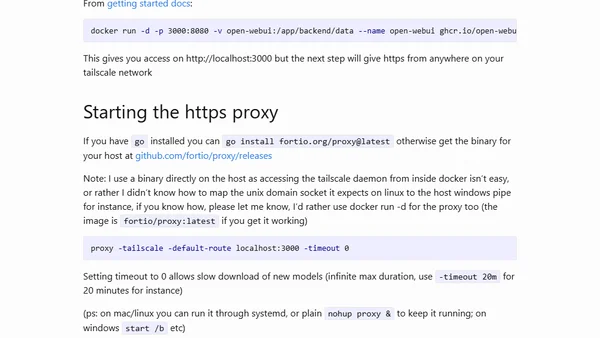

This article is a step-by-step tutorial for developers on securely exposing a locally hosted LLM UI (such as Open WebUI) over the internet. It uses Tailscale for the network layer and a Fortio proxy, written in Go, to provide HTTPS access, and it covers prerequisites such as Docker and GPU requirements.
