Numerically Stable Softmax and Cross Entropy

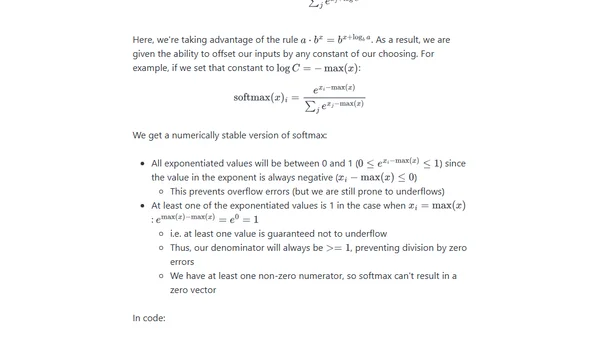

This technical article covers the softmax function and cross-entropy loss, two key components of deep learning. It shows how naive implementations can overflow or underflow (producing NaN or inf), then derives numerically stable versions by shifting the inputs, typically by subtracting the maximum logit. The shifted versions compute reliably even with extreme logit values.
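The shifting trick can be sketched in plain Python. It works because softmax is unchanged when the same constant c is added to every logit: exp(z_i - c) / Σ_j exp(z_j - c) = exp(z_i) / Σ_j exp(z_j). Choosing c = max_j z_j makes every exponent ≤ 0, so nothing overflows, and the largest term is exp(0) = 1, so the denominator is at least 1. Cross entropy is computed from the log-sum-exp identity log Σ_j exp(z_j) = m + log Σ_j exp(z_j - m), which means it never evaluates log(0).

This is a minimal sketch under stated assumptions. The function names are hypothetical, it uses the standard library instead of whatever framework the original article may use, and note that Python's `math.exp` raises `OverflowError` for large inputs rather than returning inf as NumPy does.

```python
import math

# Hypothetical helper names for illustration; not taken from the original article.

def naive_softmax(logits):
    # Direct formula: math.exp(1000.0) raises OverflowError in pure Python
    # (NumPy would return inf instead, and inf / inf then gives nan).
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def stable_softmax(logits):
    # Shift by the max logit: every exponent is <= 0, so each term is in (0, 1].
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)  # >= 1, because the max logit contributes exp(0) = 1
    return [e / total for e in exps]

def log_softmax(logits):
    # log softmax(z)_i = z_i - logsumexp(z), with logsumexp computed after the shift.
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]

def cross_entropy(logits, target):
    # Loss for one example with integer class index `target`:
    # -log softmax(logits)[target], without ever taking log of an underflowed 0.0.
    return -log_softmax(logits)[target]

if __name__ == "__main__":
    try:
        naive_softmax([1000.0, 0.0])
    except OverflowError as err:
        print("naive softmax failed:", err)
    print(stable_softmax([1000.0, 1000.0, 1000.0]))  # three equal probabilities of 1/3
    print(cross_entropy([1000.0, 0.0], target=1))    # 1000.0
```

Computing cross entropy as `-math.log(stable_softmax(logits)[target])` would still fail in the last example: the target probability exp(-1000) underflows to 0.0, and `math.log(0.0)` raises `ValueError`. Working in log space through `log_softmax` avoids that second failure mode.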
