The Illustrated Retrieval Transformer

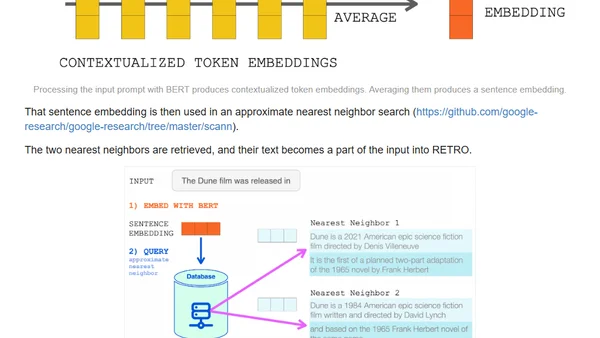

This article details DeepMind's RETRO (Retrieval-Enhanced Transformer) model, which matches GPT-3's performance using only 4% of the parameters by retrieving information from a database. It discusses the shift from simply scaling model size to augmenting models with external knowledge retrieval. Separating linguistic patterns from factual world knowledge improves the efficiency and capability of language models.
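To make the idea of "retrieving information from a database" concrete, here is a minimal sketch of the lookup step. It is not RETRO's actual mechanism: the bag-of-words `embed` function, the three-entry `DATABASE`, and the `build_input` helper that simply prepends the retrieved text to the prompt are all assumptions made for illustration, standing in for a learned encoder, a very large text database, and the model's own way of attending to retrieved passages.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding (assumption): lowercase bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, database, k=1):
    """Return the k database passages most similar to the query."""
    q = embed(query)
    ranked = sorted(database, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


# Hypothetical stand-in for the external knowledge store.
DATABASE = [
    "Dune is a 1965 science fiction novel by Frank Herbert",
    "The Eiffel Tower is located in Paris",
    "Python is a programming language",
]


def build_input(prompt, database, k=1):
    """Prepend retrieved passages to the prompt (a simplification:
    the facts come from the database, not from model weights)."""
    neighbors = retrieve(prompt, database, k)
    return "\n".join(neighbors + [prompt])


if __name__ == "__main__":
    print(build_input("Who wrote the novel Dune", DATABASE))
```

The point of the sketch is the division of labor: the factual sentence about Dune lives in the database and can be updated or extended without retraining anything, while the language model only has to make use of the text it is handed.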
