How to stop Google from scanning my site

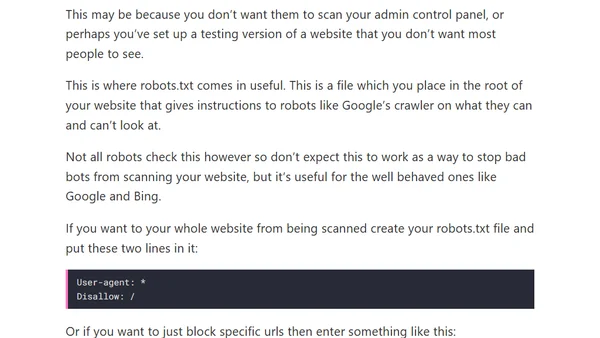

This article explains the purpose and usage of the robots.txt file, which asks search engines like Google not to crawl (scan) a website or parts of it. It covers common scenarios such as blocking admin panels or test sites, gives basic syntax examples for disallowing either the whole site or specific URLs, and explains the file's limits: it is advisory, so malicious bots can simply ignore it.
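The summary mentions rules that disallow the whole site or only specific URLs. As a minimal sketch, the following Python script uses the standard library's `urllib.robotparser` to show how a crawler that honors robots.txt would interpret such rules. The rules, the `/admin/` path, the bot names, and the example.com URLs are hypothetical illustrations, not taken from the original article. One group blocks Googlebot from the entire site; the other blocks every other crawler from `/admin/` only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration:
# - the Googlebot group disallows the whole site ("Disallow: /")
# - the catch-all "*" group disallows only URLs under /admin/
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text the way a well-behaved crawler would."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines instead of fetching a URL.
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    checks = [
        ("Googlebot", "https://example.com/blog/post"),
        ("SomeOtherBot", "https://example.com/blog/post"),
        ("SomeOtherBot", "https://example.com/admin/login"),
    ]
    for agent, url in checks:
        # can_fetch() returns True if this user agent may crawl the URL.
        print(agent, url, rp.can_fetch(agent, url))
```

The check only happens because the crawler chooses to call `can_fetch`; a bot that skips this step is not affected at all, which is the limitation the article points out. Python's parser also uses simple prefix matching, so it may not interpret every pattern extension Googlebot supports (such as `*` and `$` wildcards) the same way Googlebot does.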

