An information maximization view on the $\beta$-VAE objective

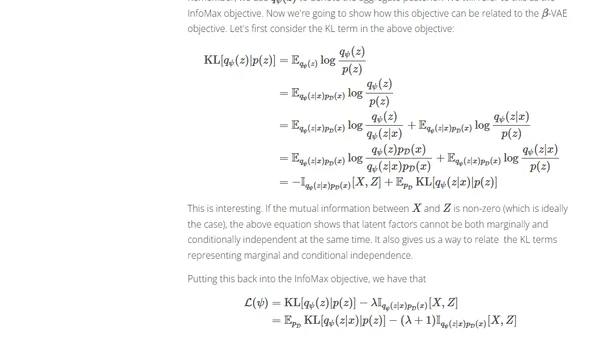

This article provides a deep, technical analysis of the β-VAE (Variational Autoencoder) objective, deriving it from first principles of information maximization. It explains how the β hyperparameter controls the trade-off between reconstruction accuracy and the KL divergence penalty, and how this encourages the learning of disentangled latent representations by promoting coordinate-wise conditional independence in the posterior distribution.
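To make the trade-off concrete, here is a minimal sketch of the β-VAE loss in its commonly used form: a reconstruction term plus β times a KL penalty. It is not taken from the article. It assumes a diagonal Gaussian posterior and a standard normal prior, for which the KL term has a closed form, and the function names `gaussian_kl` and `beta_vae_loss` as well as the numbers are purely illustrative.

```python
import math


def gaussian_kl(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over latent coordinates.

    Assumption: diagonal Gaussian posterior and standard normal prior.
    """
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, logvar))


def beta_vae_loss(recon_nll, mu, logvar, beta=4.0):
    """Loss to minimize: reconstruction negative log-likelihood + beta * KL."""
    return recon_nll + beta * gaussian_kl(mu, logvar)


# Hypothetical values for a single data point with a 2-D latent code.
mu, logvar = [0.5, -0.2], [0.0, 0.1]
for beta in (1.0, 4.0):
    print(beta, beta_vae_loss(recon_nll=10.0, mu=mu, logvar=logvar, beta=beta))
```

With β = 1 this reduces to the usual negative ELBO. Larger values of β weight the KL term more heavily, so the same posterior incurs a larger penalty for deviating from the prior.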
