Creating a GitHub Action to detect toxic comments using TensorFlow.js

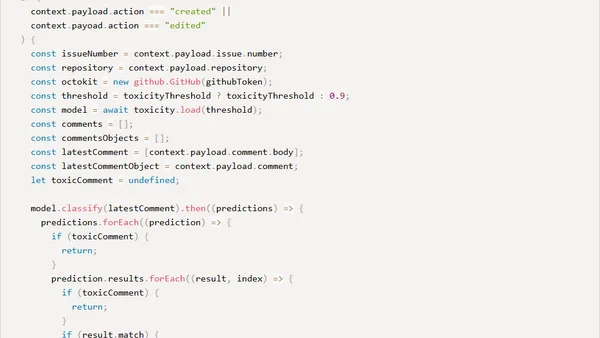

This technical article walks through building a custom GitHub Action with JavaScript, TensorFlow.js, and a pre-trained toxicity model. It explains how to set up the action so that it analyzes comments for categories such as insults, threats, and obscenity, and automatically flags toxic content with a customizable bot response. The guide includes code snippets for the action.yml configuration and the core implementation logic.
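The classification step described above can be sketched roughly as follows. This is a minimal sketch and not the article's own code: it assumes the `@tensorflow-models/toxicity` package and its `load`/`classify` calls, and the helper names (`flaggedLabels`, `buildReply`, `analyzeComment`), the 0.9 threshold, and the default reply text are all hypothetical choices made for illustration.

```javascript
// Hypothetical default reply; the article describes the bot response as customizable.
const DEFAULT_MESSAGE = 'This comment was flagged as potentially toxic';

/**
 * Return the labels the model matched for the first input sentence.
 * Assumes the prediction shape returned by the toxicity model's classify():
 * [{ label, results: [{ probabilities, match }] }], where match is
 * true, false, or null (null when neither class clears the threshold).
 */
function flaggedLabels(predictions) {
  return predictions
    .filter((p) => p.results.length > 0 && p.results[0].match === true)
    .map((p) => p.label);
}

/** Build the bot reply, or return null when nothing was flagged. */
function buildReply(labels, message = DEFAULT_MESSAGE) {
  if (labels.length === 0) return null;
  return `${message} (${labels.join(', ')}).`;
}

/**
 * Classify one comment. The packages are required lazily so the pure
 * helpers above can be used without TensorFlow.js installed.
 * Assumes @tensorflow/tfjs and @tensorflow-models/toxicity are installed.
 */
async function analyzeComment(text, threshold = 0.9) {
  require('@tensorflow/tfjs'); // registers a backend for the model to run on
  const toxicity = require('@tensorflow-models/toxicity');
  const model = await toxicity.load(threshold);
  const predictions = await model.classify([text]);
  return flaggedLabels(predictions);
}
```

Inside an action, the string returned by `buildReply` would be what gets posted back on the issue or pull request; how that reply is posted and how the message is read from the action's inputs is left out here because the summary does not specify it.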
